Using Istio to run parallel environments in Stage and Canary Deployments in Production at Halodoc

Introduction

When organizations switch to microservices-based architectures, managing and monitoring the ever-increasing complexity of service interactions becomes a significant problem. This is where Istio comes in: a service mesh that offers powerful observability and traffic management features to developers and SREs. The rapid growth of start-ups like Halodoc can be slowed down by gaps such as the lack of canary and Blue-Green strategies for deploying microservices, and that is the topic we dive into in this blog post.

But did you know that Istio can also be used to serve multiple lower environments on a single platform? Yes, it is technically feasible to deploy two versions of a microservice on the same platform using shared AWS resources. This allowed us to shut down entire dedicated lower environments that each ran all the services (such as UAT, DEV and PreProd), saving infrastructure cost while providing the same functionality on a single platform. Read on for the details.

What is Istio?

Istio is a service mesh, which is a specific type of network infrastructure layer that regulates communication between services. With this layer in place, the many components of an application can communicate with one another. Service meshes frequently coexist with containers, microservices, and cloud-based systems.

The delivery of service requests in an application is managed by a service mesh. Service discovery, load balancing, encryption, and failure recovery are typical functions offered by a service mesh. High availability is also frequently achieved by using software that is managed by APIs rather than hardware. Service meshes can make service-to-service communication fast, reliable and secure. Istio offers a solution to challenges of microservice-based systems such as:

- Traffic management: Traffic Routing, Retries, Timeouts and Load balancing.

- Security: Authentication and Authorization of end users.

- Observability: Logging, Tracing and Monitoring.

Istio runs on Kubernetes and is implemented using the sidecar pattern. This means that each microservice has its own sidecar proxy that handles all network traffic to and from the service.

Istio provides tools such as istioctl (its command-line utility) and Pilot (the control-plane component that handles service discovery and traffic configuration) that can be used to manage and monitor the platform. The following architecture makes this clearer.

Let's understand the Istio-specific terminology:

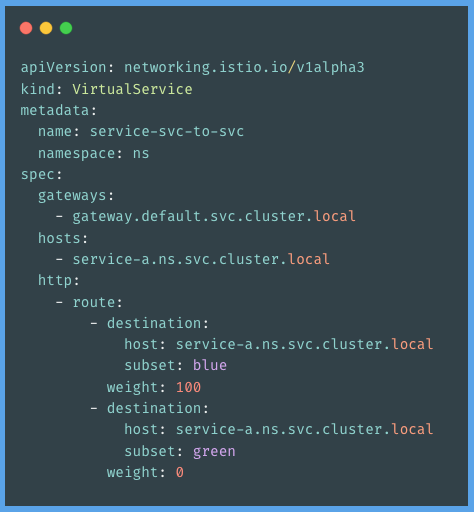

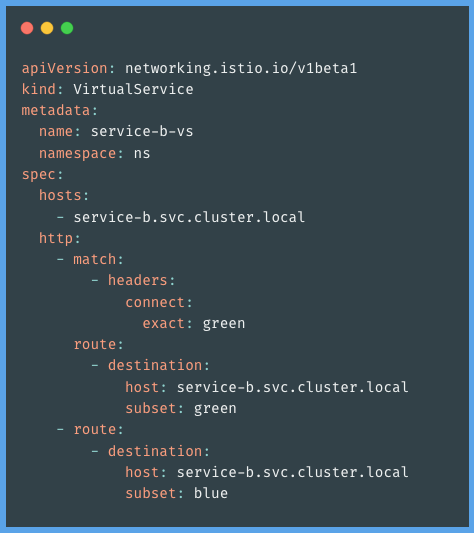

Virtual Service

A VirtualService specifies a set of traffic routing rules. Each routing rule defines the criteria that traffic of a particular protocol must match. When a match is made, the traffic is routed to one of the named destination services (or a subset or version of it) listed in the registry. Let's look at the following example to understand this better:

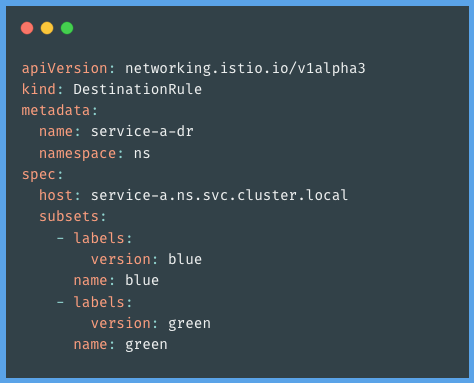
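As a textual counterpart, here is a minimal sketch of what such a virtual service can look like. It is built as a Python dictionary and printed as JSON, which `kubectl` accepts as well as YAML. The service name `orders` and the subset name `v1` are hypothetical and are not taken from our actual configuration.

```python
import json


def virtual_service(name, host, subset):
    """Build a minimal VirtualService that sends all HTTP traffic for `host` to one subset."""
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "VirtualService",
        "metadata": {"name": name},
        "spec": {
            # Hosts this set of routing rules applies to.
            "hosts": [host],
            # A single HTTP rule with no match block: it applies to every request.
            "http": [
                {"route": [{"destination": {"host": host, "subset": subset}}]}
            ],
        },
    }


# "orders" and "v1" are illustrative names only.
manifest = virtual_service("orders", "orders", "v1")
print(json.dumps(manifest, indent=2))
```

The `subset` named here only has meaning once a destination rule defines it for the same host.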

Destination Rules

In Istio, traffic routing relies heavily on destination rules. They are policies that are applied to traffic after a virtual service has routed it to a particular destination. Destination rules define the available subsets of a service that traffic can be sent to, whereas a virtual service matches a rule and decides which destination to route the traffic to.

For instance, by creating destination rules we can define routes to several versions of a service that are running simultaneously. We can then use virtual services to split traffic across those versions or to map requests to a specific subset defined by the destination rules. Below is an example of a destination rule.

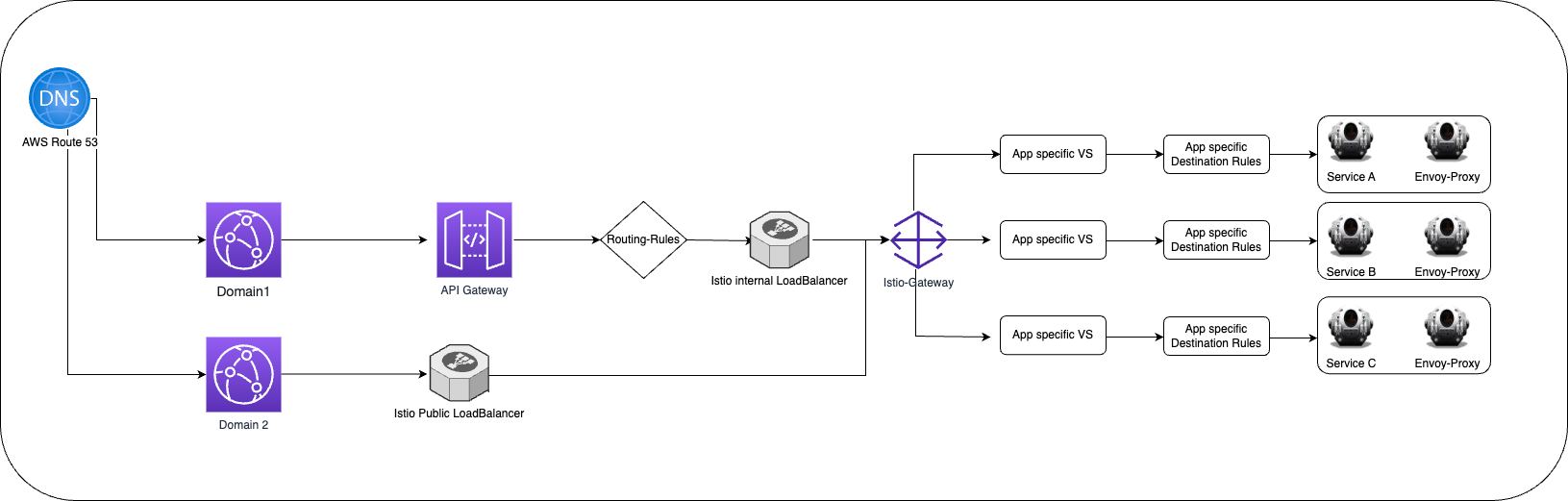
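To make the idea of subsets concrete, here is a minimal sketch of a destination rule, again built as a Python dictionary and printed as JSON. The host `orders`, the subset names `blue` and `green`, and the `version` pod label are assumptions chosen for illustration.

```python
import json


def destination_rule(name, host, versions):
    """Build a DestinationRule with one subset per entry in `versions`.

    `versions` maps a subset name to the value of the pod label `version`
    that selects the pods belonging to that subset.
    """
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "DestinationRule",
        "metadata": {"name": name},
        "spec": {
            "host": host,
            "subsets": [
                {"name": subset, "labels": {"version": label}}
                for subset, label in versions.items()
            ],
        },
    }


# Hypothetical names: two versions of the same service, exposed as two subsets.
rule = destination_rule("orders", "orders", {"blue": "v1", "green": "v2"})
print(json.dumps(rule, indent=2))
```

Each subset is simply a named group of pods selected by label, which a virtual service can then reference by name.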

How did we implement Istio at Halodoc?

At Halodoc, we implemented Istio to simplify the way we handle microservice deployments. We had to ensure that the platform was secure and resilient, and we also had to optimize the setup for our existing services. To achieve this, we used istioctl to manage and configure the platform to match our requirements.

We used Istio’s default ingress and egress gateways to secure and route traffic. Istio’s service discovery feature allowed us to look up our services by name, making it easier to integrate with other services. We configured Istio for fine-grained traffic control and monitoring.

Adopting Istio helped us not only with managing microservices but also with cost optimization. Let's talk about the two types of deployments we use Istio for in the staging and production environments.

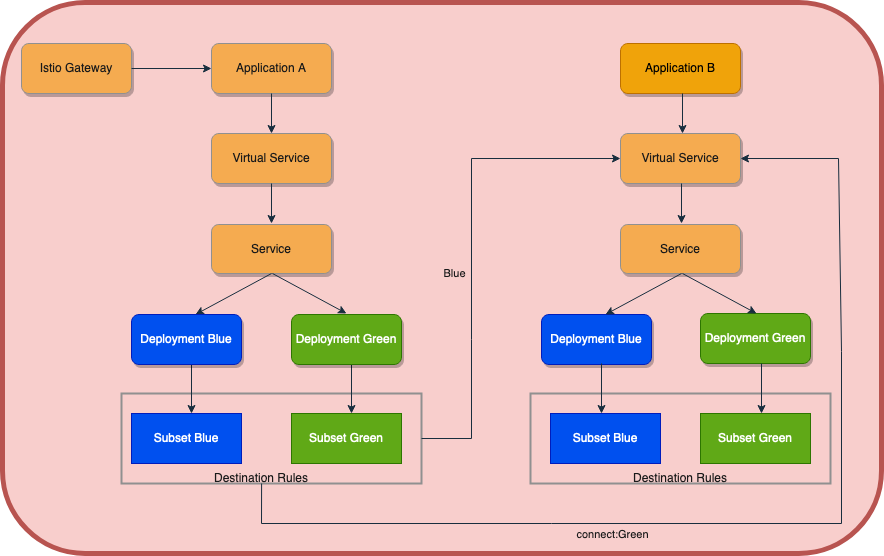

- Blue and Green: we use isolated environments on the same platform to deploy two versions of a microservice on shared AWS resources, which allowed us to shut down multiple lower environments and deliver the same functionality on a single platform.

- Canary deployments: we make a new feature available to a small set of users, based on the percentage of traffic we decide to expose to the new version.

Let's discuss both approaches in detail.

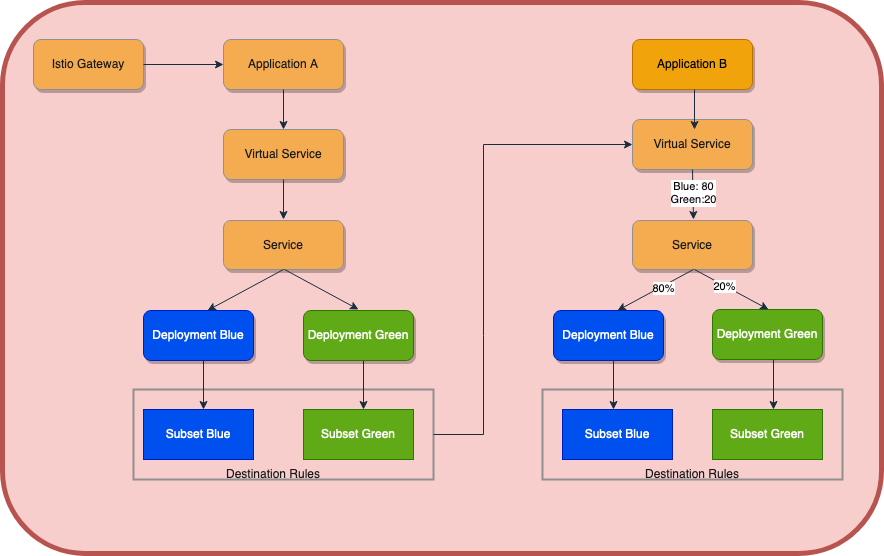

1. Isolated environments (Blue and Green): In this approach, we enhanced our CI/CD pipeline so that it can deploy two versions of the same application with the help of virtual services and destination rules. Let's look at the architecture for this approach and then at the virtual service configuration.

Virtual service configurations:

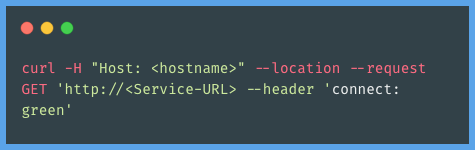

Two routes to the destination service are specified in the virtual service above. The green route is used when the request includes the expected header and its value meets the match criteria; otherwise, the default blue route is used. Let's look at an example of a curl request.

With this strategy, we can deploy many microservice applications in isolated mode within an existing environment and carry out performance testing and feature testing on a single platform. Performance tests and other tests that could potentially bring down an entire service, and so disrupt engineers on other teams who depend on it, can now be run in the lower environment without waiting for off-business hours.

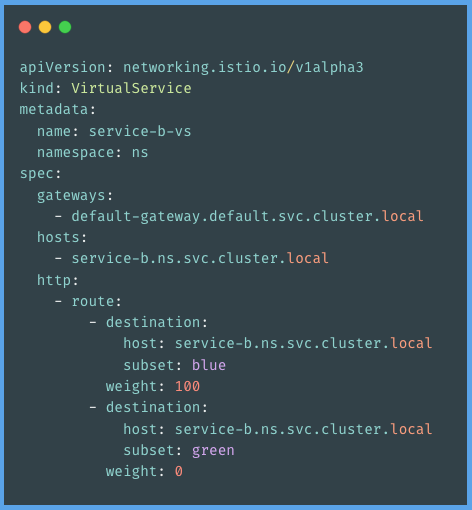
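The header-based selection described in this section can be sketched as follows. This is a simplified model and not our production configuration: the header name `x-env`, its value `green`, and the service name `orders` are hypothetical, and `select_subset` only imitates two behaviours of Istio (HTTP rules are evaluated in order, and `exact` header matches compare the full value).

```python
def blue_green_virtual_service(host, header, green_value):
    """VirtualService with a header-matched green route and a default blue route."""
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "VirtualService",
        "metadata": {"name": host},
        "spec": {
            "hosts": [host],
            "http": [
                {   # Rule 1: requests carrying the header go to the green subset.
                    "match": [{"headers": {header: {"exact": green_value}}}],
                    "route": [{"destination": {"host": host, "subset": "green"}}],
                },
                {   # Rule 2: no match block, so this is the default (blue) route.
                    "route": [{"destination": {"host": host, "subset": "blue"}}],
                },
            ],
        },
    }


def _headers_match(match, headers):
    """True if every `exact` header condition in `match` is satisfied."""
    return all(
        "exact" in cond and headers.get(name) == cond["exact"]
        for name, cond in match.get("headers", {}).items()
    )


def select_subset(vs, request_headers):
    """Return the subset of the first HTTP rule that matches the request."""
    headers = {k.lower(): v for k, v in request_headers.items()}
    for rule in vs["spec"]["http"]:
        matches = rule.get("match")
        if not matches or any(_headers_match(m, headers) for m in matches):
            return rule["route"][0]["destination"]["subset"]
    return None


vs = blue_green_virtual_service("orders", "x-env", "green")
print(select_subset(vs, {"x-env": "green"}))  # request with the header
print(select_subset(vs, {}))                  # request without the header
```

Because the default rule comes last and has no match block, any request without the header (or with a different value) falls through to blue, so existing consumers of the service are unaffected by the green deployment.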

2. Canary approach: In this approach, we implemented weight-based routing. At each release we initially route only 20% of traffic to the new version, and after validation we roll it out to the remaining 80%. To keep the cost of the additional pods under control, we modified the HPA settings using the helpers.tpl script, so that the number of pods launched for each version follows the weight assigned in the virtual service. This gives us many of the advantages of a blue/green environment at a low cost. Let's explore this further.

Virtual service configurations:

In this configuration, traffic is routed according to the weights set in the virtual service.
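A minimal sketch of weight-based routing, under stated assumptions, looks like this. The service name `orders` and the subset names `stable` and `canary` are hypothetical. `pick_subset` is a simplified stand-in for the proxy's weighted choice, and `replicas_for` is only one plausible way to size pods by weight; it is not the logic of our helpers.tpl script.

```python
import random


def canary_virtual_service(host, canary_weight):
    """VirtualService that splits traffic between a stable and a canary subset."""
    if not 0 <= canary_weight <= 100:
        raise ValueError("canary_weight must be between 0 and 100")
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "VirtualService",
        "metadata": {"name": host},
        "spec": {
            "hosts": [host],
            "http": [{
                "route": [
                    # The weights of all destinations in a rule add up to 100.
                    {"destination": {"host": host, "subset": "stable"},
                     "weight": 100 - canary_weight},
                    {"destination": {"host": host, "subset": "canary"},
                     "weight": canary_weight},
                ]
            }],
        },
    }


def pick_subset(vs, rng):
    """Choose a subset at random in proportion to the configured weights."""
    routes = vs["spec"]["http"][0]["route"]
    subsets = [r["destination"]["subset"] for r in routes]
    weights = [r["weight"] for r in routes]
    return rng.choices(subsets, weights=weights, k=1)[0]


def replicas_for(weight, total_replicas):
    """Share of `total_replicas` for a version, rounded up, at least 1 if it gets traffic."""
    if weight == 0:
        return 0
    return max(1, (total_replicas * weight + 99) // 100)


vs = canary_virtual_service("orders", 20)
rng = random.Random(42)  # seeded so repeated runs behave the same
sample = [pick_subset(vs, rng) for _ in range(10_000)]
canary_share = sample.count("canary") / len(sample)
print(f"canary share of simulated requests: {canary_share:.1%}")
print("replicas (canary, stable):", replicas_for(20, 10), replicas_for(80, 10))
```

Promoting the canary then amounts to raising `canary_weight` step by step until it reaches 100, and rolling back amounts to setting it to 0.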

Proven Benefits

- Ability to run multiple versions of the same service within the same cluster.

- Ability to roll out a new version of an application to a small subset of users, and to test application functionality, performance, and reliability in a production-like environment before releasing it to a wider audience.

- Faster rollback to a previous version of an application in the event of an issue or failure.

- Improved visibility into API calls and their flow.

Conclusion

In this blog, we saw how Istio can be used to address several needs:

- Blue Green deployments with the help of Istio.

- Canary deployment with the help of Istio.

- Traffic management.

- Cost optimization by shutting down redundant lower environments.

In upcoming blogs, we would like to explore other features of Istio and implement those that are the right fit for our infrastructure at Halodoc. Thanks for reading! I hope this blog shed some light on the topic, earned a place in your journey of learning or implementing Istio, and was worth your time.

Join Us

Scalability, reliability and maintainability are the three pillars that govern what we build at Halodoc Tech. We are actively looking for backend engineers/architects and if solving hard problems with challenging requirements is your forte, please reach out to us with your resumé at careers.india@halodoc.com.

About Halodoc

Halodoc is the number 1 all-around healthcare application in Indonesia. Our mission is to simplify and bring quality healthcare across Indonesia, from Sabang to Merauke.

We connect 20,000+ doctors with patients in need through our Tele-consultation service. We partner with 1500+ pharmacies in 50 cities to bring medicine to your doorstep. We've also partnered with Indonesia's largest lab provider to provide lab home services, and to top it off we have recently launched a premium appointment service that partners with 500+ hospitals that allows patients to book a doctor appointment inside our application.

We are extremely fortunate to be trusted by our investors, such as the Bill & Melinda Gates Foundation, Singtel, UOB Ventures, Allianz, Gojek and many more. We recently closed our Series B round and in total have raised USD$100 million for our mission.

Our team works tirelessly to make sure that we create the best healthcare solution, personalised for all of our patients' needs, and we are continuously on a path to simplify healthcare for Indonesia.