Full Stack SDET: The Craft of Harmonising Development and Testing

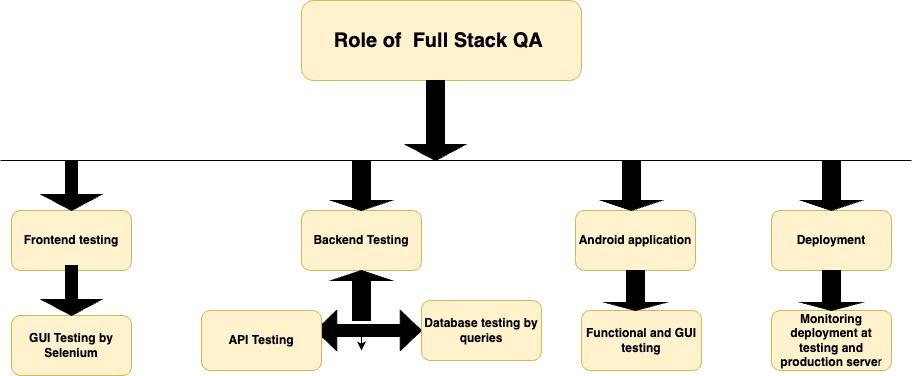

Who is a full stack tester & what does a full stack tester do?

A full stack tester is an individual tasked with evaluating an entire system, spanning from the backend to the frontend. This comprehensive approach entails assessing the complete application rather than focusing solely on isolated components. Full stack testers delve into not only the user interface but also the backend and database facets. Their responsibilities encompass test design, data compilation, and result analysis. They maintain close collaboration with software developers, jointly ensuring the thorough testing of their products.

Testers employ a variety of tools for application evaluation, including web browsers, integrated development environments (IDEs), and text editors. This diverse toolkit lets them exercise the application the way real users do, so that the experience they validate is the one users will actually get.

Full stack testing involves a holistic approach to assessing all quality dimensions of an application at every layer, culminating in the delivery of high-quality software.

Like full-stack developers, full-stack QA professionals go beyond traditional testing boundaries. They expand testing horizons and bring versatile capabilities that help teams release high-quality products quickly.

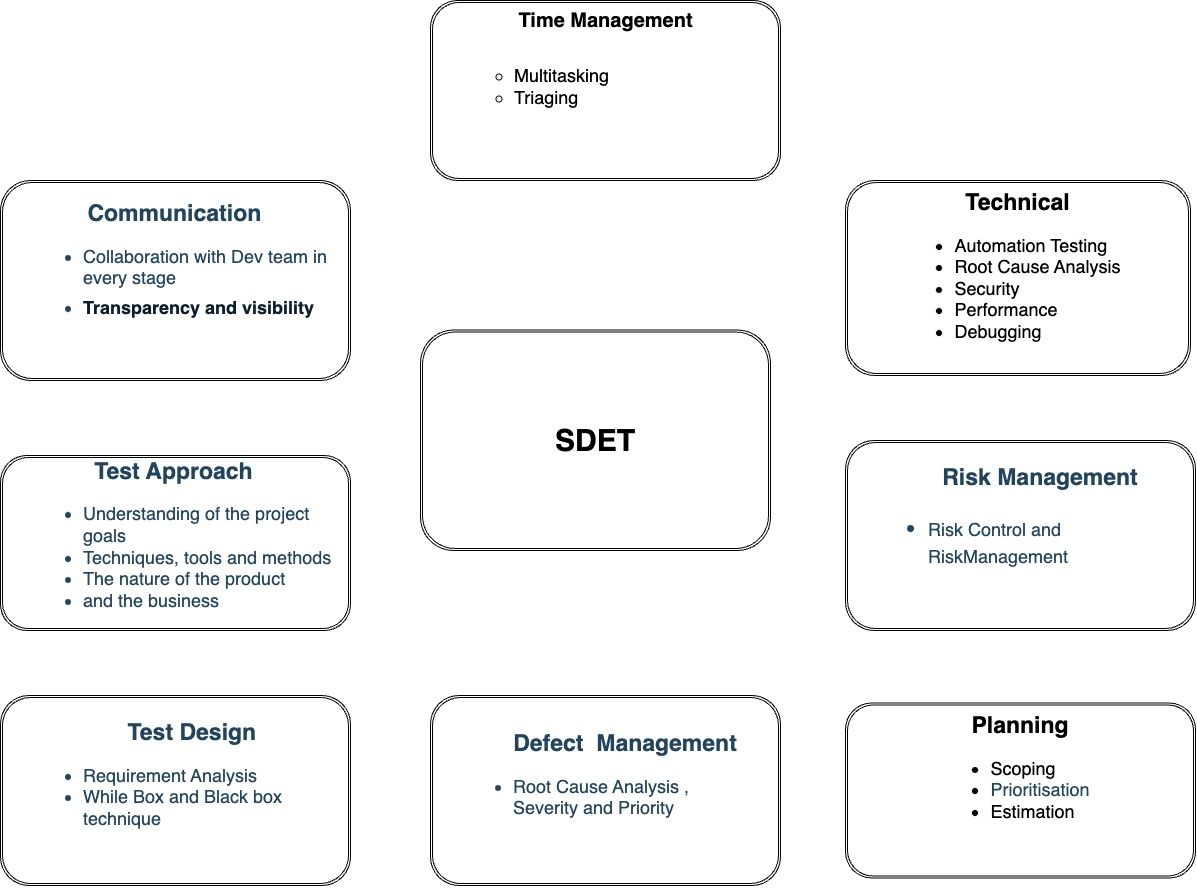

Essential Development Skills for Full Stack SDETs

- Programming language

- Automation Frameworks

- API Testing

- Git

- Performance Testing

- Jenkins

- Database

Programming language

Programming skills help testers communicate better with developers. Because automated tests are closely tied to code, knowledge of the programming language the team works with is one of the critical requirements for a tester. Strong programming skills help testers build unusual edge-case scenarios and write effective automated tests that validate the functionality and quality of various software components.

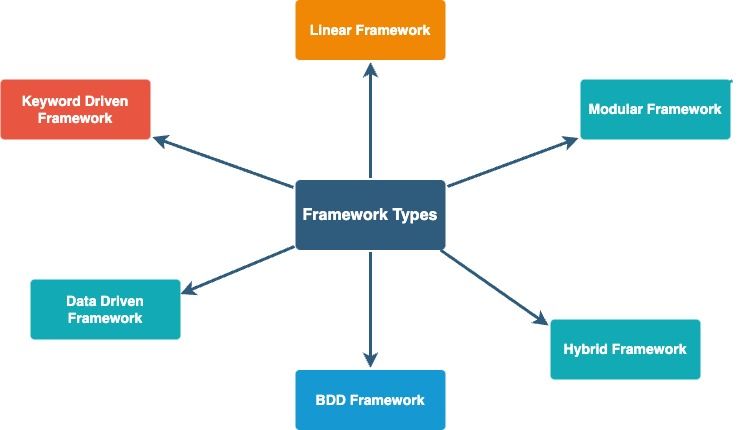

Automation Frameworks

Automation frameworks help SDETs automate most of the daily tasks that are time-consuming or difficult to perform manually. A framework is a set of automation guidelines that reduces the amount of code to write and maintain and improves reusability.

Advantages of a Test Automation Framework

- Reusability of code

- Maximum coverage

- Recovery scenario

- Low-cost maintenance

- Minimal manual intervention

- Easy Reporting

Key points to consider before selecting a Test Automation Framework

- Scripts and Data Separation

- Maximize Re-Usability

- Ability to automate scenarios that involve multiple logic paths

- Tool Extensibility

- Re-run for Failure

- Parallel Execution

API Testing

Why API Testing?

To reduce the likelihood of defects being detected only at a later stage:

- Ease of shifting left

- Lower maintenance effort

- Faster Bug Fixes

- Better Test Coverage

- Time Effective
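As a minimal sketch of what an API-level check looks like, the example below starts a throwaway stub server and asserts on the status code and JSON body. It uses only the Python standard library; the `/v1/doctors/42` endpoint, the payload fields, and the helper names are hypothetical and chosen purely for illustration.

```python
import json
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class StubHandler(BaseHTTPRequestHandler):
    """Tiny stand-in for a backend service (hypothetical endpoint)."""

    def do_GET(self):
        if self.path == "/v1/doctors/42":
            code, payload = 200, {"id": 42, "name": "Dr. Example", "available": True}
        else:
            code, payload = 404, {"error": "not found"}
        body = json.dumps(payload).encode()
        self.send_response(code)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep the test output quiet


# Ignore any proxy settings so the call always reaches the local stub.
_opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def call_api(base_url, path):
    """Return (status_code, parsed_json) for a GET request."""
    try:
        with _opener.open(base_url + path, timeout=5) as resp:
            return resp.status, json.loads(resp.read())
    except urllib.error.HTTPError as err:
        return err.code, json.loads(err.read())


server = HTTPServer(("127.0.0.1", 0), StubHandler)  # port 0 picks a free port
threading.Thread(target=server.serve_forever, daemon=True).start()
base_url = f"http://127.0.0.1:{server.server_port}"

status, payload = call_api(base_url, "/v1/doctors/42")
assert status == 200 and payload["available"] is True

missing_status, missing_payload = call_api(base_url, "/v1/doctors/999")
assert missing_status == 404

server.shutdown()
server.server_close()
```

Checks like these run in milliseconds and need no browser, which is why they are easy to move to the left of the pipeline.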

Git

Git provides testers with a powerful set of tools and practices that streamline collaboration, improve code quality, and enhance the overall testing process. It aligns testing practices with modern development methodologies and enables testers to contribute more effectively to the software development life cycle.

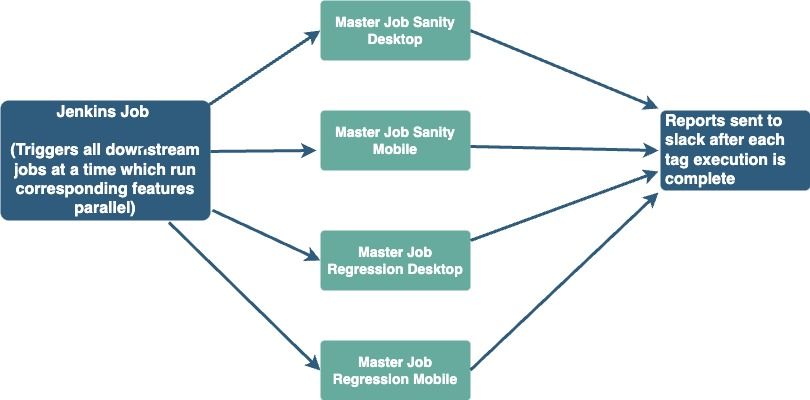

Jenkins

Why is Jenkins important for your test automation?

- First, it provides numerous plugins for an array of frameworks such as Selenium, Cucumber, Appium, WebdriverIO, etc. These plugins can be integrated into CI pipelines to run automated tests for every build.

- Most of these plugins will summarise your test results and display them neatly as you will see later in the article.

- Jenkins provides various options for reporting your test results such as Allure and JUnit.

Parallel Runs for Web Automation Tests using Jenkins jobs

- Today the suites run sequentially (Sanity takes around 1 hr 45 min and Regression around 2 hr 05 min). The goal is to reduce the run time by at least 50%.

- Parallel runs will be done based on the tags.

- A master job (Sanity/Regression) triggers the sub-jobs, which are distributed by feature tag.

- Up to 8 tests can run in parallel at a time on a dedicated Jenkins slave with 8 nodes.

- As nodes become available, parallel executions continue until all the queued jobs are complete.
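One rough way to reason about the expected gain is to simulate how queued tag jobs drain across 8 executors. The sketch below is in Python for brevity; the tag names and per-tag durations are invented for illustration (they merely add up to 105 minutes) and are not real measurements.

```python
import heapq


def simulate_parallel_run(job_minutes, executors=8):
    """Return the wall-clock minutes needed to drain the job queue.

    Jobs are taken in queue order and each one goes to whichever
    executor becomes free first.
    """
    # Min-heap holding the time at which each executor is next free.
    free_at = [0] * executors
    heapq.heapify(free_at)
    for minutes in job_minutes:
        start = heapq.heappop(free_at)  # earliest available executor
        heapq.heappush(free_at, start + minutes)
    return max(free_at)


# Hypothetical per-tag durations in minutes.
tag_jobs = {
    "@login": 10, "@payments": 20, "@consultation": 15, "@pharmacy": 15,
    "@lab": 10, "@appointments": 10, "@profile": 5, "@search": 5,
    "@insurance": 10, "@notifications": 5,
}

sequential = sum(tag_jobs.values())
parallel = simulate_parallel_run(list(tag_jobs.values()), executors=8)
print(f"sequential: {sequential} min, parallel: {parallel} min")
```

With these made-up numbers the queue drains in 20 minutes instead of 105. The longest single tag sets the floor, so splitting oversized tags matters as much as adding executors.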

Database

Database Testing involves the validation of data stored within a database by examining the elements that govern the data and the associated functionalities. Typically, this process encompasses tasks such as assessing data accuracy, evaluating data integrity, measuring performance, and scrutinizing various database procedures, triggers, and functions.
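A minimal sketch of such data checks, using an in-memory SQLite database from the Python standard library. The `patients` and `orders` tables, their columns, and the three checks are hypothetical examples rather than a real schema.

```python
import sqlite3


def find_integrity_issues(conn):
    """Run a few data checks and return {check_name: offending_row_count}."""
    checks = {
        # Orders that point at a patient that does not exist.
        "orphan_orders": """
            SELECT COUNT(*) FROM orders o
            LEFT JOIN patients p ON p.id = o.patient_id
            WHERE p.id IS NULL
        """,
        # Amounts must never be negative.
        "negative_amounts": "SELECT COUNT(*) FROM orders WHERE amount < 0",
        # Emails are expected to be unique.
        "duplicate_emails": """
            SELECT COUNT(*) FROM (
                SELECT email FROM patients GROUP BY email HAVING COUNT(*) > 1
            ) AS dupes
        """,
    }
    return {name: conn.execute(sql).fetchone()[0] for name, sql in checks.items()}


conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE patients (id INTEGER PRIMARY KEY, email TEXT NOT NULL);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, patient_id INTEGER, amount REAL);
    INSERT INTO patients VALUES (1, 'a@example.com'), (2, 'b@example.com');
    -- Order 12 references patient 99, which does not exist.
    INSERT INTO orders VALUES (10, 1, 50.0), (11, 2, 20.0), (12, 99, 5.0);
""")

issues = find_integrity_issues(conn)
print(issues)
```

Each check returns a count of offending rows, so a test can simply assert that every count is zero and report the failing check by name.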

Testing at Halodoc

Agile Automation Testing

We at Halodoc follow shift-left testing. The journey of automation testing commences during the development phase: when the UI design is nearing completion, collaboration with the QAs intensifies.

Developers furnish the static HTML pages, enabling QAs to construct the fundamental skeleton of the Page Object model, an essential component of the Cucumber framework.

This foundational structure is then enriched using the building blocks within Cucumber, represented by the feature files that outline the test's flow.

As backend APIs become integrated on the development front, these flows should necessitate only minor adjustments, given that requirements are locked in before the sprint.

Optimal Approaches to Accelerating and Ensuring Robustness of Automated UI Tests

For Web Automation and Mobile App automation testing, we follow the below approach:

- Parallel Runs for Web Automation Tests.

- Set up Jenkins nodes to handle parallel runs via TestNG.

- Segregate dependent tests to run sequentially, while independent tests run in parallel.

- Incubate the flaky tests until fixed.

- Use API calls to avoid unnecessary UI actions.

For instance:

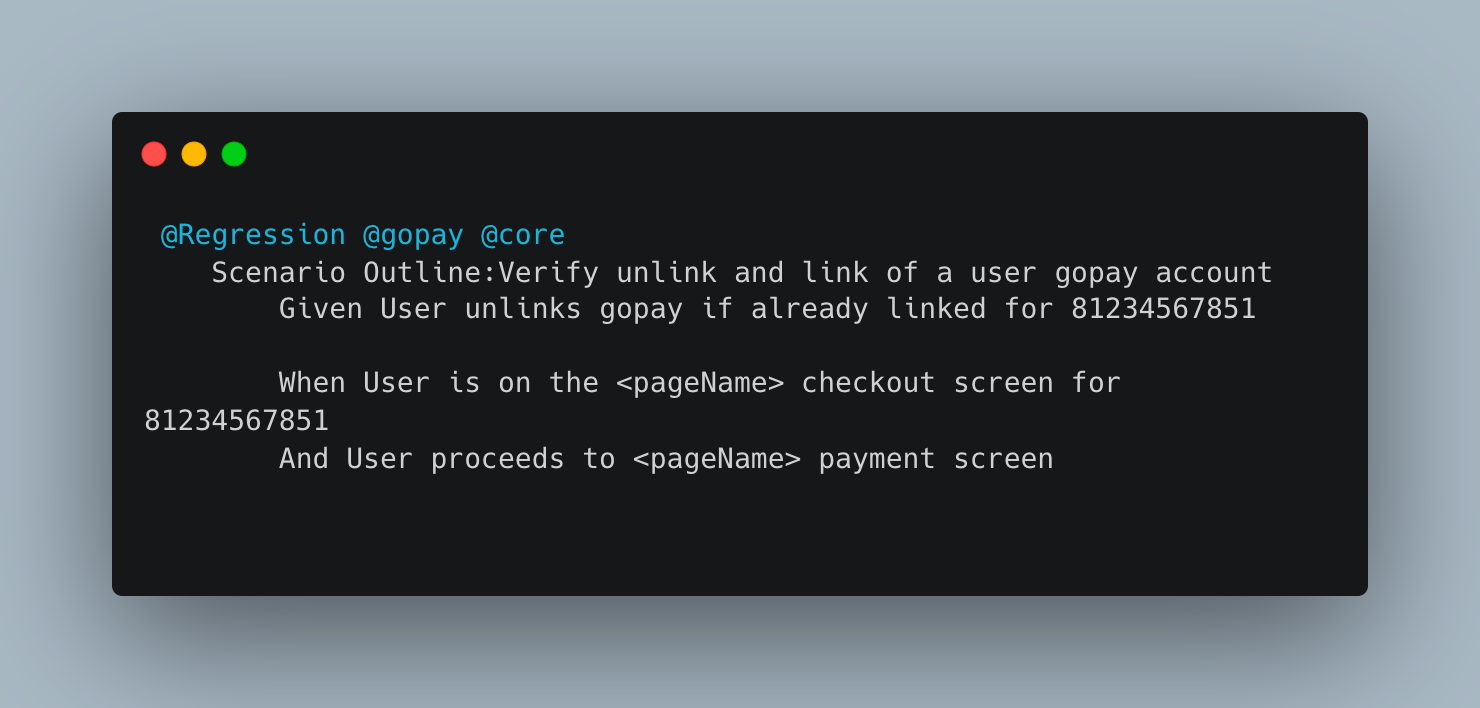

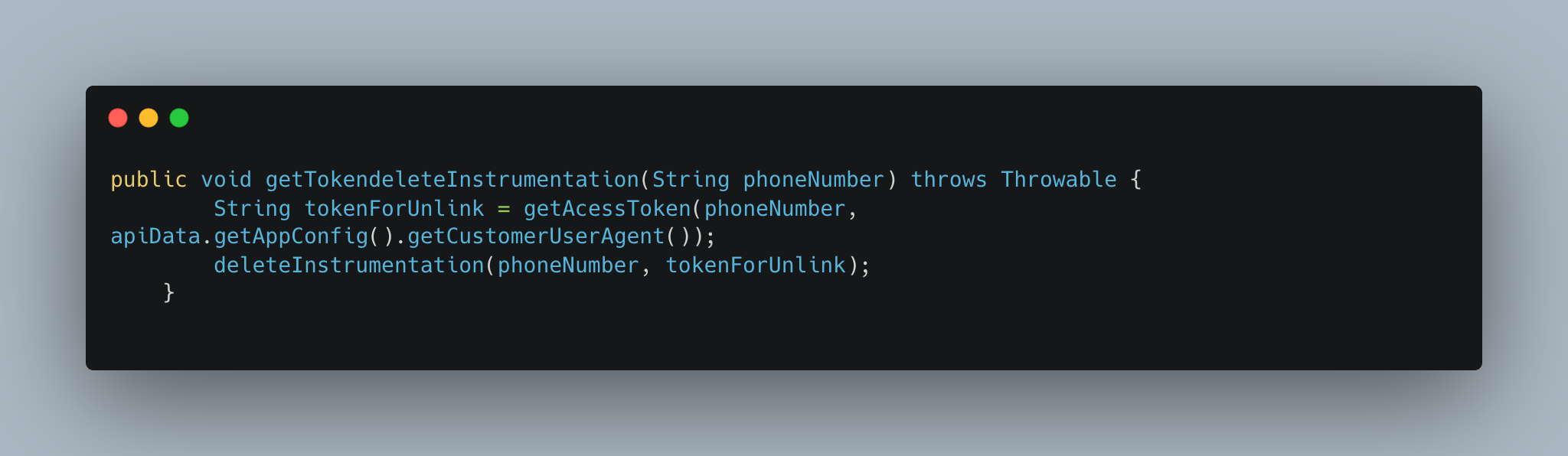

Use case: a test needs to unlink and then link a GoPay account.

- The idea is to test the successful linking of a GoPay account.

- The prerequisite is to unlink the account if it is already linked, and then proceed with the test.

- This unlinking can be done via an API call rather than through the UI top-up flow.

- Use the UI flow only to check that the GoPay account links successfully.

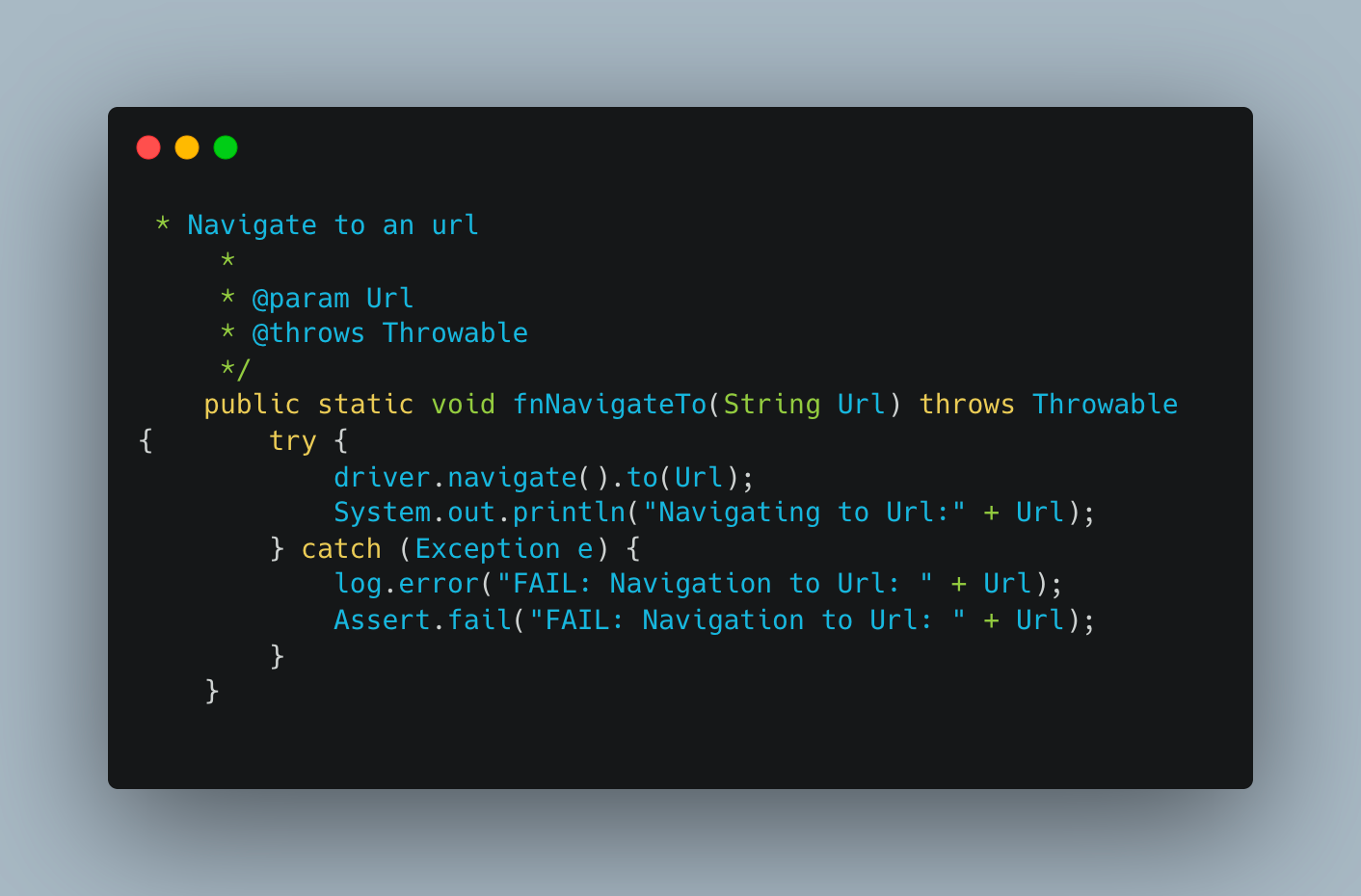
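The use case above can be sketched as follows. Both classes are in-memory stand-ins with hypothetical names: a real suite would call the backend through an HTTP client and drive a browser through a page object.

```python
class FakeWalletApi:
    """Stand-in for a backend API client (hypothetical)."""

    def __init__(self, linked=True):
        self.linked = linked
        self.calls = []  # records which API calls the test made

    def is_linked(self, user_id):
        self.calls.append(("is_linked", user_id))
        return self.linked

    def unlink(self, user_id):
        self.calls.append(("unlink", user_id))
        self.linked = False

    def link(self, user_id):
        # Called by the fake page below, standing in for the real backend.
        self.linked = True


class FakeLinkingPage:
    """Stand-in for a page object; a real one would drive a browser."""

    def __init__(self, api):
        self._api = api

    def link_account(self, user_id):
        self._api.link(user_id)
        return "Account linked successfully"


def check_wallet_linking(api, page, user_id="user-1"):
    # Precondition through the API: fast, with no browser steps involved.
    if api.is_linked(user_id):
        api.unlink(user_id)
    # Only the behaviour under test goes through the UI.
    message = page.link_account(user_id)
    assert message == "Account linked successfully"
    assert api.is_linked(user_id)
    return message


api = FakeWalletApi(linked=True)
result = check_wallet_linking(api, FakeLinkingPage(api))
print(result)
```

The UI is touched exactly once, for the step being verified, which keeps the test shorter and removes one source of flakiness.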

- Use navigational shortcuts.

- Fail-fast mechanism.

For instance: If the user can’t sign in, then there is no need to test other features that an authenticated user can do because those will eventually fail as well.
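A minimal sketch of the fail-fast idea: a gate check runs first, and if it fails the dependent checks are marked as skipped instead of being executed. The check names are hypothetical. TestNG offers a comparable mechanism through `dependsOnMethods` and `dependsOnGroups`.

```python
def run_suite(gate_check, dependent_checks):
    """Run gate_check first; skip every dependent check if it fails."""
    results = {}
    try:
        gate_check()
        results[gate_check.__name__] = "PASS"
    except AssertionError:
        results[gate_check.__name__] = "FAIL"
        # Fail fast: do not spend time on checks that cannot succeed.
        for check in dependent_checks:
            results[check.__name__] = "SKIP"
        return results

    for check in dependent_checks:
        try:
            check()
            results[check.__name__] = "PASS"
        except AssertionError:
            results[check.__name__] = "FAIL"
    return results


def sign_in():
    raise AssertionError("sign-in is broken")  # simulated failure


def view_profile():
    pass  # would assert on profile data in a real test


def book_appointment():
    pass  # would assert on the booking flow in a real test


report = run_suite(sign_in, [view_profile, book_appointment])
print(report)
```

Reporting the dependents as skipped rather than failed also keeps the report honest: one root cause shows up as one failure.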

Here is the reference for optimising Mobile App Automation test

Enhancing Web Application Performance Tracking via WebPageTest, InfluxDB, and Grafana

- Measuring the web page performance.

- Storing the Performance Metrics in InfluxDB.

- Visualizing the data through Grafana.

Using the above setup, we've taken a significant stride toward enhancing the performance of our web pages. By consistently monitoring the metrics and perpetually refining our strategies, we are maintaining a high level of responsiveness at all times.
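To make the storage step concrete, here is a minimal sketch that turns one set of page metrics into an InfluxDB line protocol record, the text format InfluxDB accepts for writes. The measurement name, tag names, and metric field names are assumptions made for this example and not the actual schema.

```python
def _escape(value):
    """Escape commas, equals signs and spaces in tag and field keys or tag values."""
    return str(value).replace(",", "\\,").replace("=", "\\=").replace(" ", "\\ ")


def _escape_measurement(name):
    """Measurement names only need commas and spaces escaped."""
    return str(name).replace(",", "\\,").replace(" ", "\\ ")


def _format_field(value):
    """Format a field value: booleans, integers (i suffix), floats, strings."""
    if isinstance(value, bool):
        return "true" if value else "false"
    if isinstance(value, int):
        return f"{value}i"
    if isinstance(value, float):
        return repr(value)
    escaped = str(value).replace("\\", "\\\\").replace('"', '\\"')
    return f'"{escaped}"'


def to_line_protocol(measurement, tags, fields, timestamp_ns):
    """Build one line: measurement,tag=value field=value timestamp."""
    if not fields:
        raise ValueError("at least one field is required")
    head = _escape_measurement(measurement)
    for key, value in sorted(tags.items()):
        head += f",{_escape(key)}={_escape(value)}"
    field_part = ",".join(
        f"{_escape(key)}={_format_field(value)}" for key, value in sorted(fields.items())
    )
    return f"{head} {field_part} {timestamp_ns}"


line = to_line_protocol(
    "webpagetest",
    {"page": "home", "env": "stage"},
    {"ttfb_ms": 180, "speed_index": 1250.5},
    1700000000000000000,
)
print(line)
```

Tags such as page and environment are what Grafana queries typically group and filter by, so they are worth choosing deliberately before the first data point is written.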

Here is the reference for a detailed explanation of Performance monitoring in web apps

Performance Testing using JMeter

Data-Driven API Selection

The journey begins with data. We meticulously combed through the NewRelic metrics to identify APIs that exhibited substantial usage patterns or prolonged response times.

Crafting JMeter Scripts for Precision

With our target APIs in sight, the next phase involved the creation of precise JMeter scripts. To accurately emulate real-world scenarios, we assembled these APIs within dedicated thread groups. The real magic came with the implementation of throughput timers. Depending on the nature of the microservices, we seamlessly integrated both the "Constant Throughput Timer" and the "Gradual Throughput Shaping Timer."
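The arithmetic behind a constant throughput target can be sketched as below. It assumes the Constant Throughput Timer's documented behaviour: the target is expressed in samples per minute, and it either applies to each thread on its own or is shared across all active threads. The function name and the numbers are illustrative only.

```python
def per_thread_interval_ms(target_per_minute, active_threads, shared=True):
    """Approximate pacing between sample starts for one thread.

    shared=True  -> the target is divided among all active threads.
    shared=False -> every thread aims for the full target by itself.
    """
    if target_per_minute <= 0 or active_threads <= 0:
        raise ValueError("target and thread count must be positive")
    per_thread_rate = target_per_minute / active_threads if shared else target_per_minute
    return 60_000 / per_thread_rate


# 600 samples per minute shared by 10 threads: one sample per second per thread.
shared_interval = per_thread_interval_ms(600, 10, shared=True)

# The same target applied per thread: each thread fires every 100 ms,
# so 10 threads together would aim for 6000 samples per minute.
per_thread_only = per_thread_interval_ms(600, 10, shared=False)
print(shared_interval, per_thread_only)
```

A timer can only slow threads down, so the thread group still needs enough threads to reach the target when responses are slow.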

Harnessing Influx and Grafana for Real-Time Insights

The data generated from our JMeter scripts needed a home, and that's where InfluxDB stepped in, fed by JMeter's Backend Listener. Creating dedicated databases for each microservice allowed us to house the results effectively. To connect this wealth of data to Grafana, we created a data source for each of our newly minted databases.

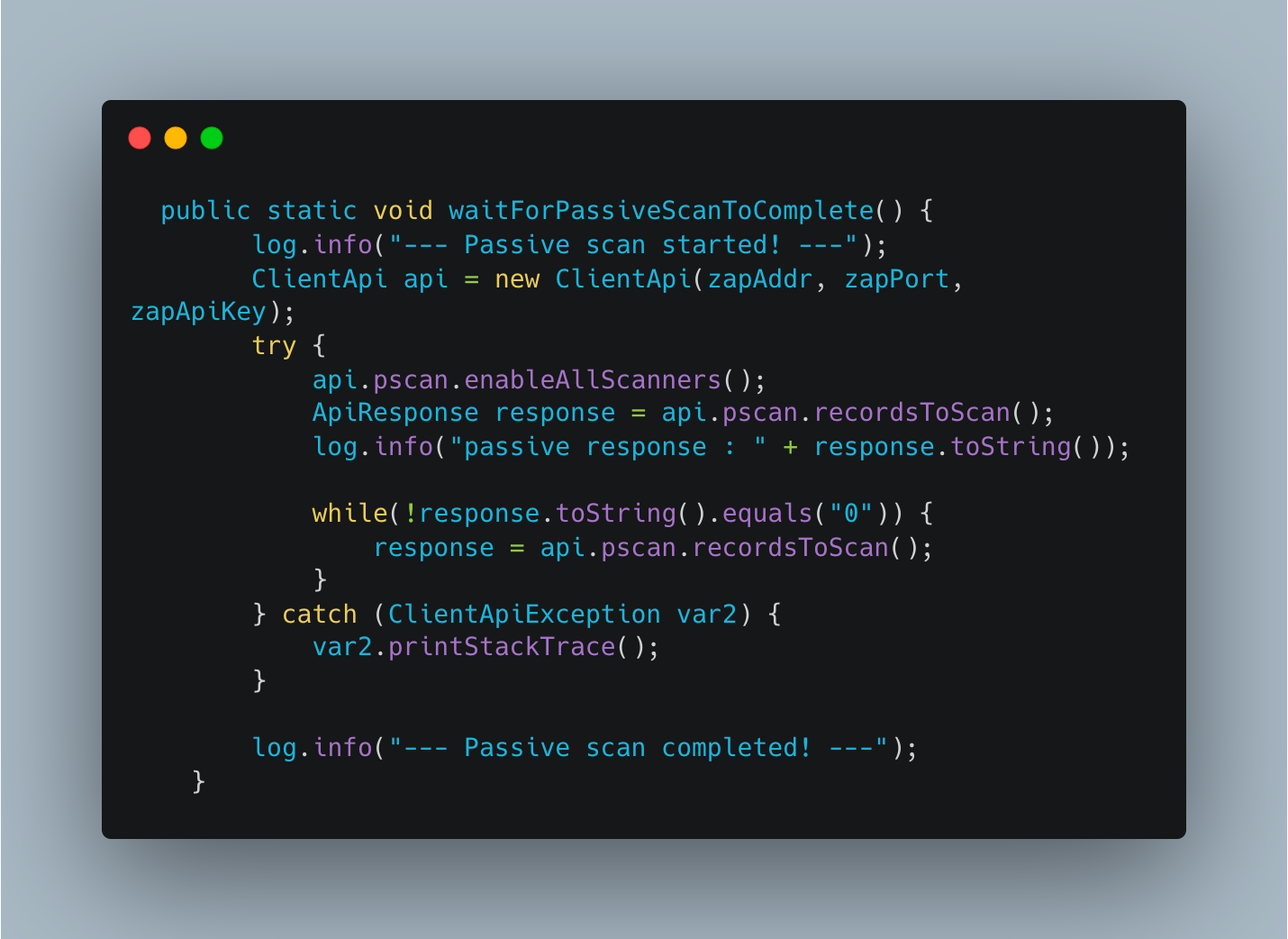

Security testing with OWASP ZAP

We've established an extensive suite of sanity and regression tests using Selenium and Cucumber, seamlessly orchestrated through Jenkins.

This robust framework plays a pivotal role in evaluating our application during its nascent development stages, guiding us to make timely, informed decisions. In a dynamic development landscape such as ours, the key to expediting application testing lies in test automation.

This not only accelerates our testing processes but also guarantees the enforcement of indispensable security protocols within our product. To fortify our security endeavors, we harnessed the prowess of OWASP ZAP security automation tests, seamlessly fusing them with our existing Selenium scripts.

The outcome was twofold: we achieved the seamless automation of our application's security assessments, all the while upholding the exacting quality standards that define our product line.

Kafka Integration Test Automation

We at Halodoc have integrated Kafka with our test automation to check how Kafka collaborates with external systems. This covers both "message production" and the subsequent "message consumption and processing." This procedure can be automated using Dockerized Kafka, which entails creating a Kafka broker and a Zookeeper instance and deploying a WireMock server.

Testing Guidelines on What to Automate

DOs:

- Automate repetitive tasks

- Automate things users will do every day

- Automate things that will save you time

- Automate things that will alert you when something is wrong

DON'Ts:

- Do not automate test cases that you know will be flaky

- Do not automate checks for bugs that will never be seen again.

While coding skills and knowledge of automation help testers become more efficient and effective, critical thinking, logical analysis, problem-solving, and storytelling are the skills at the core of any testing role.

Test reporting: how to communicate and visualize

Using TestNG Listeners, we aggregated the results of various automation suites in real time into InfluxDB. A Grafana dashboard (with the necessary plugins) was used to display the details graphically. With a large number of test automation projects and suites, having a consolidated report helped in tracking the progress of test automation along with the test results.

We added an ExecutionListener to our testng.xml file along with additional parameters for tracking. ExecutionListener listens to the test execution and captures different parameters such as:

- Test suite name

- Test name

- Test class

- Test method

- Total tests run

- Test status: PASS/FAIL/SKIP

These additional parameters (defined in testng.xml) can be used to track progress of automation in agile STLC. An example testng.xml file is given below. The parameters defined are also stored (InfluxDB) and captured in reports (Grafana).

In future, we can make TestNG listen to more metrics and capture the same to create more sophisticated reports.
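The listener idea described in this section can be sketched language-neutrally as a small collector that records one entry per finished test and produces a per-suite summary. This is a Python illustration, not the actual TestNG listener; the class names and the `sprint` tracking parameter are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ResultRecord:
    suite: str
    test: str
    test_class: str
    method: str
    status: str  # PASS, FAIL or SKIP


class ExecutionCollector:
    """Collects per-test results, in the spirit of a test execution listener."""

    VALID_STATUSES = {"PASS", "FAIL", "SKIP"}

    def __init__(self, suite, extra_params=None):
        self.suite = suite
        # Extra tracking parameters, such as those declared in a suite file.
        self.extra_params = dict(extra_params or {})
        self.records = []

    def on_result(self, test, test_class, method, status):
        if status not in self.VALID_STATUSES:
            raise ValueError(f"unknown status: {status}")
        self.records.append(ResultRecord(self.suite, test, test_class, method, status))

    def summary(self):
        counts = Counter(record.status for record in self.records)
        return {
            "suite": self.suite,
            "total": len(self.records),
            "PASS": counts["PASS"],
            "FAIL": counts["FAIL"],
            "SKIP": counts["SKIP"],
            **self.extra_params,
        }


collector = ExecutionCollector("web-sanity", {"sprint": "S-12"})
collector.on_result("Login", "LoginTests", "valid_login", "PASS")
collector.on_result("Login", "LoginTests", "locked_account", "FAIL")
collector.on_result("Profile", "ProfileTests", "edit_name", "SKIP")

summary = collector.summary()
print(summary)
```

A summary in this shape maps naturally onto a time-series record: the suite and tracking parameters become tags, and the counts become fields.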

Conclusion

In a nutshell, full-stack testing is your ticket to outstanding software. It means thoroughly examining every aspect of your application at every stage to catch and fix problems early.

Here's the golden rule: shift your testing left. That means starting testing right at the beginning of your development process. It's like laying a solid foundation for your house before you start building. This approach saves you time, money, and headaches in the long run, and it promotes better teamwork within your development crew.

In today's fast-paced tech world, full-stack testing isn't just a good idea; it's a necessity. It helps you create software that truly impresses users, builds trust, and keeps you ahead of the competition. As technology keeps evolving, full-stack testing will remain your go-to method for crafting top-tier software efficiently and comprehensively.

About Halodoc

Halodoc is the number 1 Healthcare application in Indonesia. Our mission is to simplify and bring quality healthcare across Indonesia, from Sabang to Merauke. We connect 20,000+ doctors with patients in need through our Tele-consultation service. We partner with 3500+ pharmacies in 100+ cities to bring medicine to your doorstep. We've also partnered with Indonesia's largest lab provider to provide lab home services, and to top it off we have recently launched a premium appointment service that partners with 500+ hospitals that allow patients to book a doctor appointment inside our application. We are extremely fortunate to be trusted by our investors, such as the Bill & Melinda Gates Foundation, Singtel, UOB Ventures, Allianz, GoJek, Astra, Temasek, and many more. We recently closed our Series D round and in total have raised around USD 100+ million for our mission. Our team works tirelessly to make sure that we create the best healthcare solution personalized for all of our patients' needs, and are continuously on a path to simplify healthcare for Indonesia.