Semantic Search in Healthcare: Inside Halodoc’s RAG Retrieval Architecture

Our Challenge at Halodoc

Search has always been a foundational capability at Halodoc, powering key user experiences such as medicine discovery, doctor search, and consultations. Our keyword-based search system, built on TF-IDF, continues to perform reliably and remains the primary search mechanism across the platform.

However, as we expanded into AI-driven systems such as our prescription assistance platform and Healthcare Q&A assistant, we encountered a new class of challenges.

These systems rely on Retrieval-Augmented Generation (RAG), where the quality, safety, and accuracy of responses depend heavily on retrieving the right contextual medical information. In this setting, traditional keyword-based search, while effective for user-facing discovery, falls short in capturing semantic meaning.

For example:

- “medicine for fever” may not retrieve “paracetamol tablets”

- “heart doctor” may not surface “cardiologist”

While such gaps are acceptable in traditional search, they become critical in AI workflows, where missing relevant context directly impacts response quality and safety.

This highlighted the need for semantic retrieval, enabling systems to understand intent and meaning rather than relying solely on keyword matching.

Limitations of Keyword Search for RAG

Halodoc’s keyword search already supports synonym handling, typo tolerance, and multilingual processing, and performs well for user-facing use cases. However, RAG introduces fundamentally different retrieval requirements.

In RAG systems, the goal is high semantic recall — retrieving conceptually relevant information even when the wording differs.

We observed several key challenges:

- Conversational queries often use everyday language that does not align with structured medical terminology

- The same medical concept can be expressed differently by patients and clinical sources

- Knowledge is distributed across heterogeneous datasets

- Missing context leads to weaker or incomplete AI responses

These challenges made it clear that keyword search alone is insufficient for AI-driven retrieval.

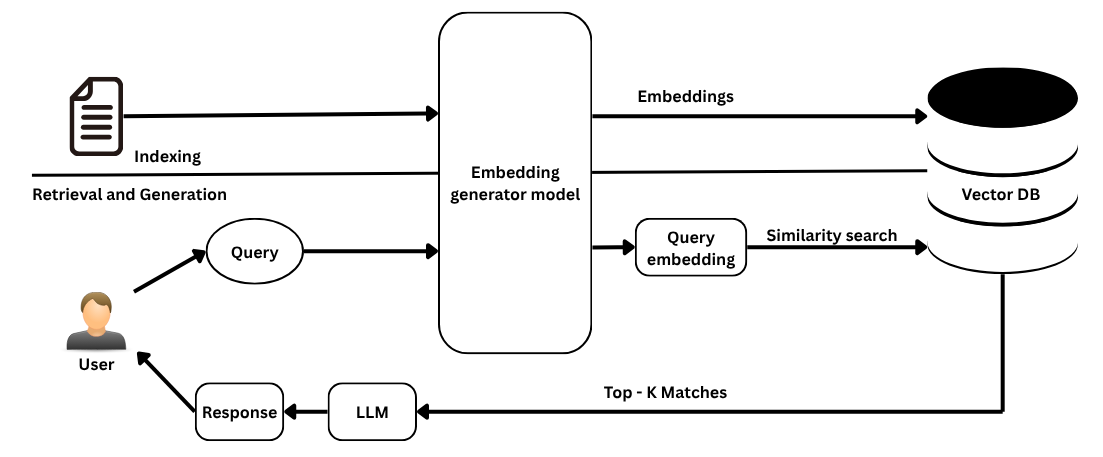

Vector Search as a Semantic Retrieval Layer for RAG

Instead of replacing our existing search system, we introduced vector search as a dedicated semantic retrieval layer for AI workflows.

Vector search works by converting content into numerical representations (embeddings) that capture semantic meaning. This enables retrieval based on similarity rather than exact keyword matches.

For example:

A query like “medicine for fever and headache” can retrieve “Paracetamol 500 mg tablets, fever relief medication”, even though the query never mentions paracetamol.

This approach significantly improves how our AI systems retrieve relevant medical context, leading to more accurate and reliable responses, while continuing to preserve keyword-based search for user-facing features.
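To make similarity-based retrieval concrete, here is a minimal sketch in Python. The three-dimensional vectors are hand-made for illustration only (real embeddings come from a model and have hundreds of dimensions), and the function names and sample documents are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Toy "embeddings": made-up numbers, not the output of any model.
documents = {
    "Paracetamol 500 mg tablets, fever relief medication": [0.9, 0.1, 0.0],
    "Vitamin C 1000 mg effervescent": [0.1, 0.9, 0.1],
    "Cardiologist consultation": [0.0, 0.1, 0.9],
}

# Pretend embedding of the query "medicine for fever and headache".
query_vector = [0.8, 0.2, 0.1]

def rank(query, docs):
    """Return document texts sorted from most to least similar to the query."""
    return sorted(
        docs,
        key=lambda text: cosine_similarity(query, docs[text]),
        reverse=True,
    )

ranking = rank(query_vector, documents)
print(ranking[0])
```

With these toy vectors the paracetamol document ranks first because its vector points in nearly the same direction as the query vector, regardless of which words the two texts share.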

Implementation Using OpenSearch

We implemented vector search using OpenSearch, leveraging its native support for k-NN vector fields and HNSW-based approximate nearest neighbor (ANN) search.

🔹 Step 1: Embedding Generation

We used a Hugging Face sentence-transformers model to generate 768-dimensional embeddings for:

- Medicine names

- Product metadata

- Synonyms

These embeddings capture semantic relationships between medical terms.
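The sketch below shows one way this step could look with the sentence-transformers library. It rests on assumptions: the post does not name the model, so `paraphrase-multilingual-mpnet-base-v2` (a 768-dimensional multilingual model) is only a stand-in, and concatenating name, metadata, and synonyms into a single string is one possible design, not necessarily the one used in production. The field names are hypothetical.

```python
def build_embedding_text(product):
    """Join name, description, and synonyms into one string to embed."""
    parts = [product["name"], product.get("description", "")]
    parts.extend(product.get("synonyms", []))
    return ". ".join(p.strip() for p in parts if p and p.strip())

def embed_products(products, model):
    """Encode each product's combined text.

    `model` is any object with an encode(list_of_str) method, such as a
    sentence_transformers.SentenceTransformer instance.
    """
    texts = [build_embedding_text(p) for p in products]
    return model.encode(texts)

def load_model(name="sentence-transformers/paraphrase-multilingual-mpnet-base-v2"):
    """Load a sentence-transformers model (assumed model name).

    Requires the sentence-transformers package and downloads the model
    weights on first use, so it is not called at import time.
    """
    from sentence_transformers import SentenceTransformer
    return SentenceTransformer(name)

# Hypothetical product record.
product = {
    "name": "Paracetamol 500 mg",
    "description": "Fever relief medication",
    "synonyms": ["acetaminophen", "obat demam"],
}
print(build_embedding_text(product))
```

Calling `embed_products([product], load_model())` would return one vector per product; with the assumed model each vector has 768 dimensions.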

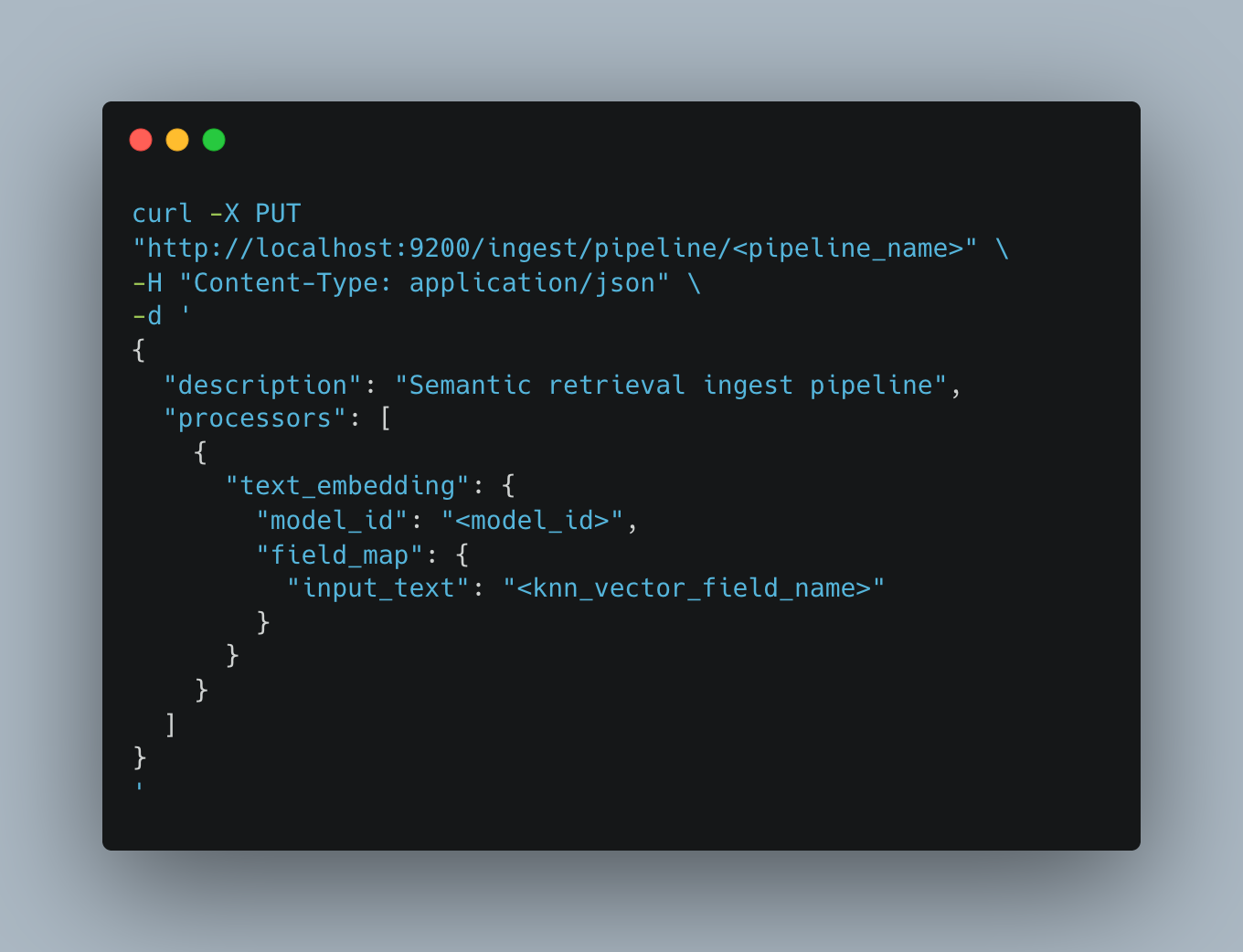

🔹 Step 2: Embedding Pipeline

We created an OpenSearch ingest pipeline to automatically generate embeddings during data ingestion, ensuring that every indexed document is immediately available for semantic retrieval without additional processing.
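As an illustration, an ingest pipeline of this kind can be defined with the `text_embedding` processor from OpenSearch's neural search functionality. The sketch below only builds the request body; the pipeline name, field names, and model ID placeholder are assumptions, not values from our deployment.

```python
def text_embedding_pipeline(model_id, source_field="text", vector_field="embedding"):
    """Build the request body for PUT /_ingest/pipeline/<pipeline-name>.

    The text_embedding processor reads `source_field`, runs it through the
    deployed model, and writes the resulting vector to `vector_field`.
    """
    return {
        "description": "Generate embeddings at ingest time",
        "processors": [
            {
                "text_embedding": {
                    "model_id": model_id,
                    "field_map": {source_field: vector_field},
                }
            }
        ],
    }

# "<your-model-id>" is a placeholder for the ID of a model deployed in the cluster.
pipeline_body = text_embedding_pipeline("<your-model-id>")

# With the opensearch-py client, the pipeline could be registered like this
# (hypothetical pipeline name):
#   client.ingest.put_pipeline(id="medicine-embedding-pipeline", body=pipeline_body)
print(pipeline_body["processors"][0]["text_embedding"]["field_map"])
```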

🔹 Step 3: Vector Index and Vector Storage

We configured a vector-enabled index with a dedicated embedding field. Each document stores both the original text and its vector representation, enabling efficient similarity search.
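A vector-enabled index of this shape might be defined as follows. This is a sketch under assumptions: the field names, the `lucene` engine, the `cosinesimil` space type, and the HNSW parameters (`m`, `ef_construction`) are illustrative choices, not our production settings.

```python
def vector_index_body(dimension=768, pipeline=None):
    """Build the request body for creating a k-NN enabled index."""
    body = {
        "settings": {"index": {"knn": True}},
        "mappings": {
            "properties": {
                # Original text, kept for display and keyword matching.
                "text": {"type": "text"},
                # Vector representation used for similarity search.
                "embedding": {
                    "type": "knn_vector",
                    "dimension": dimension,
                    "method": {
                        "name": "hnsw",
                        "space_type": "cosinesimil",
                        "engine": "lucene",
                        "parameters": {"m": 16, "ef_construction": 128},
                    },
                },
            }
        },
    }
    if pipeline:
        # Run the embedding ingest pipeline on every indexed document.
        body["settings"]["index"]["default_pipeline"] = pipeline
    return body

index_body = vector_index_body(pipeline="medicine-embedding-pipeline")

# With the opensearch-py client (hypothetical index name):
#   client.indices.create(index="medicines-vector", body=index_body)
print(index_body["mappings"]["properties"]["embedding"]["dimension"])
```

The `dimension` value has to match the output size of the embedding model, which is why a model change also means a new index.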

🔹 Step 4: Semantic Retrieval in RAG

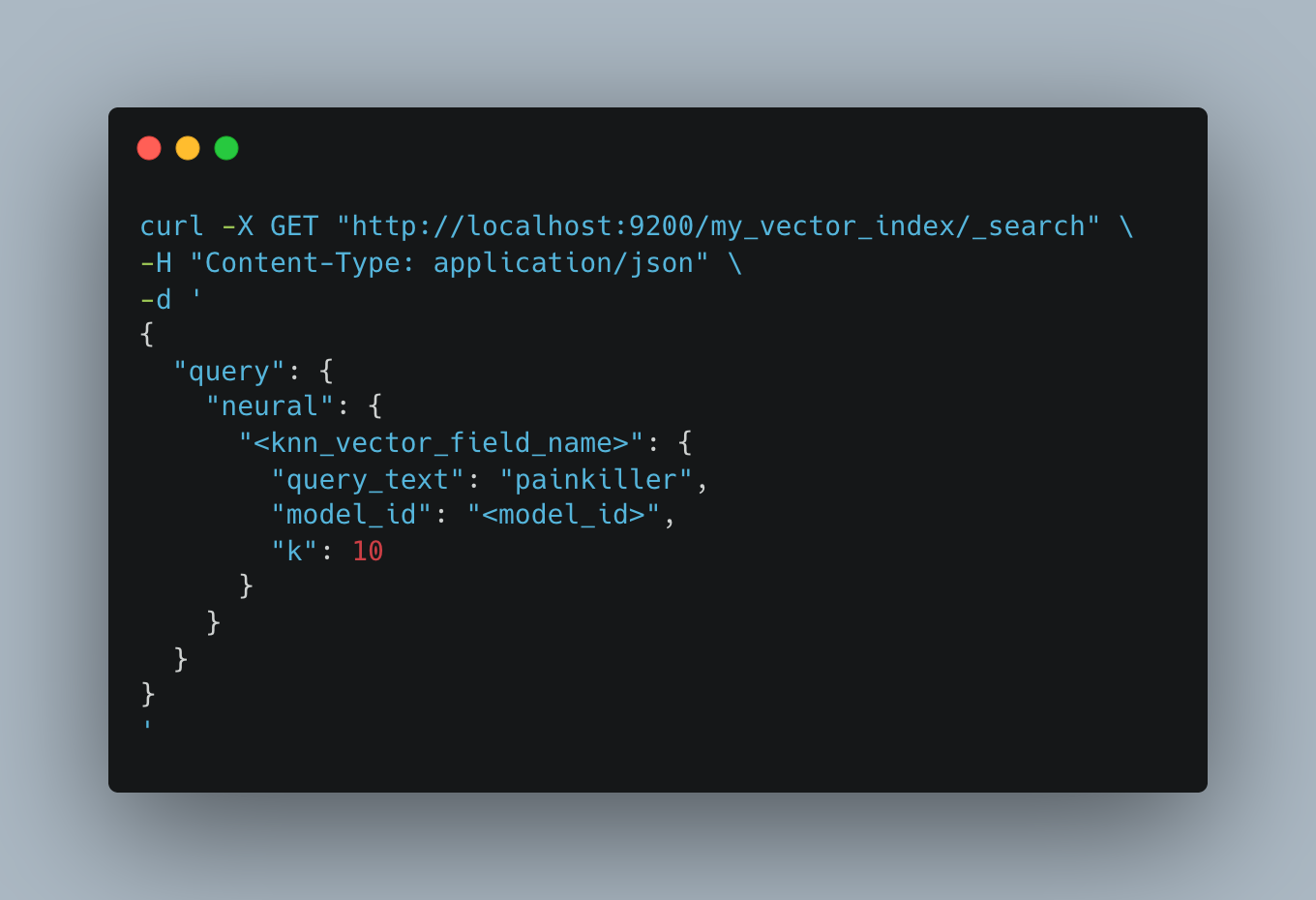

OpenSearch supports AI-powered search capabilities, including semantic, hybrid, multimodal, and conversational search with Retrieval-Augmented Generation (RAG). These approaches enable automatic embedding generation from query inputs.

At query time:

- The user query is converted into an embedding

- A vector similarity search retrieves relevant documents

- The retrieved context is passed to the LLM for grounded response generation

Grounding the LLM in retrieved context helps keep responses accurate, contextual, and medically relevant.

To perform AI-powered search, use the neural query type. Specify the query_text, the embedding model ID configured in the ingest pipeline, and the number of results (k) to return.
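A request body for such a neural query could be built like this. The vector field name, index name, and model ID placeholder are assumptions for illustration.

```python
def neural_query(query_text, model_id, k=5, vector_field="embedding"):
    """Build a search request body that uses the neural query type."""
    return {
        "size": k,
        # Leave the raw vector out of the response; only the text is needed.
        "_source": {"excludes": [vector_field]},
        "query": {
            "neural": {
                vector_field: {
                    "query_text": query_text,
                    "model_id": model_id,
                    "k": k,
                }
            }
        },
    }

# "<your-model-id>" stands for the model used by the ingest pipeline.
search_body = neural_query("medicine for fever and headache", "<your-model-id>", k=3)

# With the opensearch-py client (hypothetical index name):
#   response = client.search(index="medicines-vector", body=search_body)
print(search_body["query"]["neural"]["embedding"]["k"])
```

OpenSearch embeds `query_text` with the referenced model and runs the k-NN search in one request, so the caller never handles the query vector directly.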

Choosing Embedding Dimensions

Choosing the right embedding size is critical:

- Lower dimensions (e.g., 384) are faster but less expressive

- Higher dimensions (e.g., 1536) capture richer semantics but come with higher computational and storage costs

We selected 768 dimensions, balancing semantic depth, performance, and cost.
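The storage side of this trade-off is easy to estimate. The sketch below assumes vectors are stored as 32-bit floats and uses a hypothetical corpus of one million vectors; it ignores index overhead.

```python
BYTES_PER_FLOAT32 = 4

def raw_vector_bytes(num_vectors, dimension):
    """Bytes needed to store the raw float32 vectors, excluding index overhead."""
    return num_vectors * dimension * BYTES_PER_FLOAT32

# Hypothetical corpus size, chosen only to make the numbers easy to compare.
NUM_VECTORS = 1_000_000

for dim in (384, 768, 1536):
    gib = raw_vector_bytes(NUM_VECTORS, dim) / 2**30
    print(f"{dim:>4} dims: {gib:.2f} GiB per million vectors")
```

Raw storage grows linearly with dimension: roughly 1.43 GiB, 2.86 GiB, and 5.72 GiB per million vectors for 384, 768, and 1536 dimensions respectively.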

Why HNSW?

We chose HNSW (Hierarchical Navigable Small World) for similarity search because it provides:

- High recall

- Fast retrieval (millisecond-level latency)

- Scalability for large datasets

Although the HNSW graph must be held in memory, which raises RAM requirements, it is well-suited for real-time AI systems.
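The memory cost can be approximated with the rule of thumb given in the OpenSearch k-NN documentation for HNSW: about `1.1 * (4 * dimension + 8 * M)` bytes per vector, where `M` is the number of graph links per node. The sketch below applies it to a hypothetical corpus of one million 768-dimensional vectors with `M = 16`; both figures are assumptions, not our production values.

```python
def hnsw_memory_bytes(num_vectors, dimension, m=16):
    """Estimate HNSW memory use: 1.1 * (4 * dimension + 8 * M) bytes per vector."""
    per_vector = 1.1 * (4 * dimension + 8 * m)
    return per_vector * num_vectors

# Hypothetical corpus: one million vectors at 768 dimensions.
estimate = hnsw_memory_bytes(1_000_000, 768, m=16)
print(f"Estimated HNSW memory: {estimate / 1e9:.2f} GB")
```

The `4 * dimension` term is the float32 vector itself, so most of the memory goes to the vectors rather than the graph links.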

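Multilingual Support

Semantic search is not tied to a single language: with a multilingual embedding model, queries and documents in different languages map into the same vector space. Our setup supports both Bahasa Indonesia and English, allowing users to interact naturally in their preferred language while receiving accurate, context-aware responses.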

Lessons Learned

- Embeddings are model-dependent; changing models requires reindexing

- Hybrid retrieval (keyword + vector) consistently improves results

- Offline embedding generation scales better for large datasets than generating embeddings inline during ingestion

- Retrieval quality directly impacts hallucination rates
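To illustrate the hybrid retrieval lesson, the sketch below merges a keyword ranking and a vector ranking with reciprocal rank fusion (RRF). RRF is used here only as a simple, well-known fusion technique; the post does not state which fusion method our systems use, and the document IDs are made up.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into a single ranking.

    Each document scores 1 / (k + rank) for every list it appears in,
    and documents are returned by descending total score.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)

# Made-up result lists from a keyword search and a vector search.
keyword_hits = ["doc_a", "doc_b", "doc_c"]
vector_hits = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
print(fused)
```

Documents found by both retrievers (`doc_a`, `doc_c`) rise above those found by only one, which is the behavior that makes hybrid retrieval more robust than either method alone.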

These insights shaped our approach to the next phase of our search infrastructure — expanding vector search from a standalone retrieval feature into a broader capability supporting multiple AI-driven systems.

Vector Search Beyond Retrieval — Enabling AI Systems

Vector search has evolved into a foundational capability powering:

- RAG for prescription assistance and healthcare Q&A

- Context retrieval for conversational AI

- Semantic clustering for recommendations

Rather than replacing keyword search, it complements it — forming a hybrid retrieval approach that improves AI grounding and relevance.

This semantic capability has also enabled more intuitive, natural language–driven user experiences. One such experience is Concierge Search, built on top of this semantic retrieval foundation.

Concierge Search — AI Assistant Layer

We are introducing Concierge Search, an AI assistant layer built on these semantic capabilities. It understands user intent and delivers context-aware healthcare results that go beyond keyword matching.

The system follows a hybrid architecture:

- Structured data (doctors, products, orders) → retrieved via APIs

- Knowledge queries (FAQs) → handled through RAG with vector search
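The split above can be pictured as a small router. In this sketch the intent label is assumed to come from an upstream classifier that is not shown, and the handler names and return values are hypothetical.

```python
def route_query(intent, query, api_handlers, rag_handler):
    """Send structured intents to their API handler and everything else to RAG."""
    handler = api_handlers.get(intent, rag_handler)
    return handler(query)

# Hypothetical handlers standing in for real service calls.
api_handlers = {
    "doctor": lambda q: {"source": "doctor_api", "query": q},
    "product": lambda q: {"source": "product_api", "query": q},
    "order": lambda q: {"source": "order_api", "query": q},
}

def rag_handler(query):
    """Stand-in for the RAG path: vector retrieval followed by LLM generation."""
    return {"source": "rag", "query": query}

doctor_result = route_query("doctor", "cardiologist near me", api_handlers, rag_handler)
faq_result = route_query("faq", "how do I reschedule a consultation?", api_handlers, rag_handler)
print(doctor_result["source"], faq_result["source"])
```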

For FAQ queries:

- Semantic retrieval fetches relevant content

- The retrieved context is injected into the LLM

- The LLM generates a grounded response
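The context-injection step can be sketched as plain prompt assembly. The prompt wording, the passage limit, and the sample passages are assumptions for illustration; the actual prompt used in Concierge Search is not shown here.

```python
def build_grounded_prompt(question, passages, max_passages=3):
    """Assemble an LLM prompt that restricts the answer to retrieved passages."""
    numbered = [
        f"[{i}] {passage}"
        for i, passage in enumerate(passages[:max_passages], start=1)
    ]
    context = "\n".join(numbered)
    return (
        "Answer the question using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )

# Made-up passages standing in for semantic retrieval results.
retrieved = [
    "Consultations can be rescheduled from the appointment details page.",
    "Rescheduling is free up to one hour before the appointment.",
    "Refunds are processed within a few working days.",
    "Loyalty points expire after twelve months.",
]

prompt = build_grounded_prompt("How do I reschedule a consultation?", retrieved)
print(prompt)
```

Capping the number of passages keeps the prompt within the model's context budget and limits the chance that loosely related text dilutes the answer.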

Cost & Performance Considerations

To ensure scalability, we:

- Adopted offline embedding generation

- Leveraged GPU-backed ML nodes for efficiency

- Optimized indexing throughput and inference costs

This approach enabled us to maintain high-quality embeddings while scaling across large datasets.
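Offline generation typically means embedding documents in batches and writing the precomputed vectors with bulk requests. The sketch below shows that shape under assumptions: the batch size, index name, and document fields are hypothetical, and `embed_batch` stands for any function that maps a list of texts to a list of vectors.

```python
def batched(items, size):
    """Yield consecutive slices of `items` with at most `size` elements."""
    for start in range(0, len(items), size):
        yield items[start:start + size]

def bulk_actions(documents, embed_batch, index_name, batch_size=64):
    """Yield bulk-index actions that carry precomputed embeddings.

    Embedding a whole batch in one call is what makes offline generation
    efficient, especially on GPU-backed nodes.
    """
    for batch in batched(documents, batch_size):
        vectors = embed_batch([doc["text"] for doc in batch])
        for doc, vector in zip(batch, vectors):
            yield {
                "_index": index_name,
                "_id": doc["id"],
                "_source": {"text": doc["text"], "embedding": list(vector)},
            }

def fake_embed_batch(texts):
    """Toy embedder used only to make the example runnable."""
    return [[float(len(text)), 0.0] for text in texts]

docs = [{"id": str(i), "text": f"product {i}"} for i in range(5)]
actions = list(bulk_actions(docs, fake_embed_batch, "medicines-vector", batch_size=2))

# With the opensearch-py client, the actions could be sent with:
#   from opensearchpy import helpers
#   helpers.bulk(client, actions)
print(len(actions))
```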

Impact

The introduction of vector search and RAG pipelines led to:

- Improved answer quality in AI systems

- Reduced hallucination rates

- More reliable and medically grounded responses

These improvements were achieved without disrupting existing search infrastructure.

Impact metrics:

- Total prescriptions validated by the prescription assistance platform to date: ~21K

- Total bookings recorded from users who engaged with the Healthcare Q&A assistant: ~32K

Conclusion

Vector search did not replace Halodoc’s core search system; instead, it evolved into a semantic retrieval layer that enhances AI-powered experiences.

By integrating vector search into RAG pipelines, we improved the accuracy, reliability, and safety of healthcare AI systems while preserving the strengths of keyword-based search.

More importantly, this architecture provides a scalable foundation for future AI-driven healthcare innovations at Halodoc.

Join us

Scalability, reliability and maintainability are the three pillars that govern what we build at Halodoc Tech. We are actively looking for engineers at all levels. If solving hard problems with challenging requirements is your forte, please reach out to us with your résumé at careers.india@halodoc.com.

About Halodoc

Halodoc is the number one all-around healthcare application in Indonesia. Our mission is to simplify and deliver quality healthcare across Indonesia, from Sabang to Merauke.

Since 2016, Halodoc has been improving health literacy in Indonesia by providing user-friendly healthcare communication, education, and information (KIE). In parallel, our ecosystem has expanded to offer a range of services that facilitate convenient access to healthcare, starting with Homecare by Halodoc as a preventive care feature that allows users to conduct health tests privately and securely from the comfort of their homes; My Insurance, which allows users to access the benefits of cashless outpatient services in a more seamless way; Chat with Doctor, which allows users to consult with over 20,000 licensed physicians via chat, video or voice call; and Health Store features that allow users to purchase medicines, supplements and various health products from our network of over 4,900 trusted partner pharmacies. To deliver holistic health solutions in a fully digital way, Halodoc offers Digital Clinic services including Haloskin, a trusted dermatology care platform guided by experienced dermatologists.

We are proud to be trusted by global and regional investors, including the Bill & Melinda Gates Foundation, Singtel, UOB Ventures, Allianz, GoJek, Astra, Temasek, and many more. With over USD 100 million raised to date, including our recent Series D, our team is committed to building the best personalized healthcare solutions — and we remain steadfast in our journey to simplify healthcare for all Indonesians.