Implementing an HTTP/2 Client with OkHttp

What is HTTP/2?

HTTP/2 Definition

HTTP/2 is the second major version of HTTP, the application protocol of the web. It does not modify the application semantics of HTTP in any way: all the core concepts, such as HTTP methods, status codes, URIs, and header fields, remain in place. What HTTP/2 changes is how the data is formatted (framed) and transported between the client and the server, both of which manage the entire process and hide the complexity from our applications within the new framing layer. To achieve the performance goals set by the HTTP Working Group, HTTP/2 introduces a new binary framing layer that is not backward compatible with HTTP/1.x servers and clients, hence the major protocol version increment to HTTP/2. The HTTP/2 specification was officially standardized in May 2015 and grew out of Google's HTTP-compatible SPDY protocol (as stated in https://blog.chromium.org/2015/02/hello-http2-goodbye-spdy.html).

HTTP/2 Purpose

The primary goals for HTTP/2 are to reduce latency by enabling complete request and response multiplexing, minimize protocol overhead via efficient compression of HTTP header fields, and add support for request prioritization and server push.

HTTP/2 Flow

In HTTP/1.1, a request or response is sent as a single plain-text message made up of headers and a body, while in HTTP/2 the same message is split into binary frames. For every request/response exchange, these frames are shipped as a stream. A stream is a bi-directional sequence of frames that share a common identifier (a stream id). Because every frame carries its stream id, frames from multiple streams can be interleaved on the wire and reassembled on the other side, which allows for proper multiplexed communication over a single connection.
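To make the idea of frames tagged with a stream id more concrete, here is a minimal toy sketch in Java. It is not a real HTTP/2 parser: the `Frame` class, the `StreamDemux` name, and the payloads are hypothetical, and real frames also carry a length and flags. It only shows how interleaved frames on one connection can be grouped back into their streams.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Toy model of HTTP/2 stream multiplexing (illustration only).
final class StreamDemux {

    // Simplified frame: only a stream id, a frame type, and a payload.
    static final class Frame {
        final int streamId;
        final String type;
        final String payload;

        Frame(int streamId, String type, String payload) {
            this.streamId = streamId;
            this.type = type;
            this.payload = payload;
        }
    }

    // Group interleaved frames by stream id, keeping per-stream order.
    static Map<Integer, List<Frame>> demultiplex(List<Frame> wire) {
        Map<Integer, List<Frame>> streams = new LinkedHashMap<>();
        for (Frame frame : wire) {
            streams.computeIfAbsent(frame.streamId, id -> new ArrayList<>()).add(frame);
        }
        return streams;
    }

    // Reassemble the body of one stream by concatenating its DATA frames.
    static String body(List<Frame> frames) {
        StringBuilder sb = new StringBuilder();
        for (Frame frame : frames) {
            if (frame.type.equals("DATA")) {
                sb.append(frame.payload);
            }
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        // Two responses interleaved on a single connection.
        List<Frame> wire = Arrays.asList(
            new Frame(1, "HEADERS", ":status: 200"),
            new Frame(3, "HEADERS", ":status: 200"),
            new Frame(1, "DATA", "hello "),
            new Frame(3, "DATA", "foo"),
            new Frame(1, "DATA", "world"),
            new Frame(3, "DATA", "bar"));

        demultiplex(wire).forEach((id, frames) ->
            System.out.println("stream " + id + " body: " + body(frames)));
    }
}
```

Neither stream has to wait for the other to finish: the receiver sorts frames by stream id as they arrive, which is what lets one TCP connection carry several requests at once.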

What problem were we trying to solve?

HTTP/1.1 can effectively process only one outstanding request per TCP connection at a time, forcing clients to open multiple TCP connections to process multiple requests simultaneously. We wanted to reduce that cost, especially in load time and request time, with the connection multiplexing feature. Fully multiplexing requests on a single TCP connection reduces memory, network, and CPU load for both the client and the server. In short, by migrating to HTTP/2, we wanted to minimize the latency of each HTTP request and enable parallel requests instead of waiting for each request's completion before processing the next one.

What is OkHttp, and why did we choose it?

OkHttp (https://square.github.io/okhttp/) is an efficient HTTP & HTTP/2 client for Android and Java applications. It is widely used on Android and is well documented.

Before deciding on OkHttp, we ran a proof of concept (POC) for each eligible HTTP/2 client library candidate: Apache, Jetty, and OkHttp.

The first library we evaluated was Apache's, and it gave unsatisfying results:

- The setup requires some understanding of Apache's class used for initialization, while Jetty and OkHttp are pretty straightforward.

- For feature testing, e.g., connection multiplexing, Apache's library gave a lot of ConnectionClosedException, as shown in the screenshot below:

It was therefore eliminated as a candidate early on. After this elimination, we had to decide between Jetty and OkHttp. After another POC, we listed the advantages of OkHttp over Jetty, which led us to choose OkHttp as the client library. The advantages were as follows:

- OkHttp has better handling for the connection pooling feature, as it has a more straightforward connection configuration setup and management.

- At Halodoc, we use both Brotli and GZip compression. OkHttp supports Deflate and Brotli compression alongside the standard GZip compression, while Jetty only supports GZip compression.

- OkHttp supports a caching mechanism (it caches HTTP and HTTPS responses to the filesystem as a reusable resource, thus saving time and bandwidth). We can enable the cache if we need it going forward.
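As a rough illustration of the connection pooling and caching points above, the following Java sketch configures both on an `OkHttpClient`. The class name `PooledCachedClientSketch`, the pool size, the keep-alive duration, and the 10 MiB cache size are assumptions picked for the example, not the values we use in production. Brotli is left out because OkHttp ships it in a separate `okhttp-brotli` artifact.

```java
import java.io.File;
import java.util.concurrent.TimeUnit;
import okhttp3.Cache;
import okhttp3.ConnectionPool;
import okhttp3.OkHttpClient;

// Hypothetical helper showing pool and cache setup (values are illustrative).
final class PooledCachedClientSketch {

    static final long CACHE_SIZE_BYTES = 10L * 1024 * 1024; // 10 MiB, assumed

    static OkHttpClient newClient(File cacheDir) {
        return new OkHttpClient.Builder()
            // Keep up to 5 idle connections alive for 5 minutes.
            .connectionPool(new ConnectionPool(5, 5, TimeUnit.MINUTES))
            // Store cacheable HTTP and HTTPS responses on the filesystem.
            .cache(new Cache(cacheDir, CACHE_SIZE_BYTES))
            .build();
    }

    public static void main(String[] args) {
        File cacheDir = new File(System.getProperty("java.io.tmpdir"), "okhttp-cache-sketch");
        OkHttpClient client = newClient(cacheDir);
        System.out.println("cache max size: " + client.cache().maxSize());
    }
}
```

Sharing one client instance across the application is what allows the pool and the cache to be reused between calls.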

Migration

HTTP/2 Server

On the server side, we only need to enable HTTP/2 support, which includes adding Jetty ALPN support for HTTP/2.

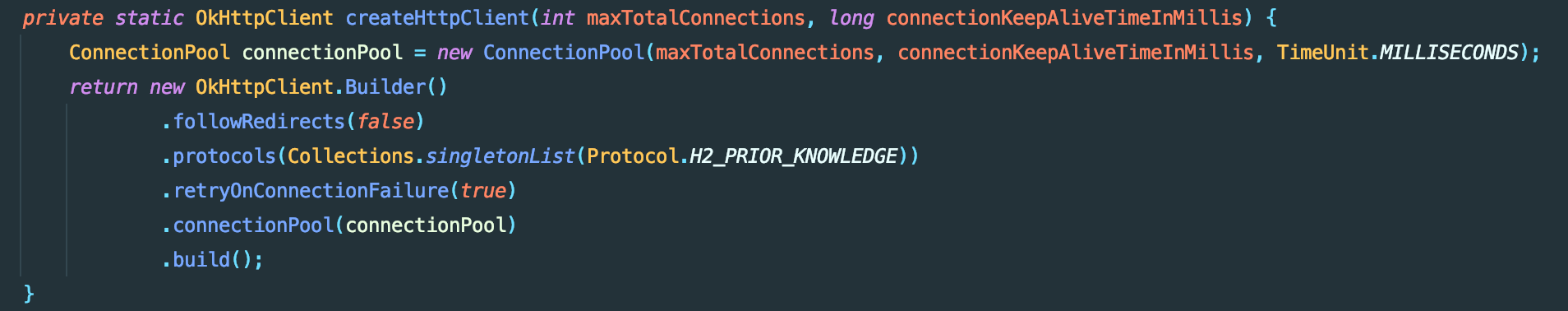

OkHttp Client

Setting up the OkHttp client is also very straightforward: we need to define the connection and choose the protocol. Since we are migrating to HTTP/2 fully, we chose the H2_PRIOR_KNOWLEDGE protocol. H2_PRIOR_KNOWLEDGE means that we enforce HTTP/2 calls to the server: the client will not fall back to HTTP/1.1 if the server does not support HTTP/2, and will throw an exception instead.
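A minimal sketch of such a client in Java might look like the following. The class name `Http2ClientSketch` and the `http://localhost:8080/ping` endpoint are hypothetical, and this is not necessarily the exact configuration we run. OkHttp documents `Protocol.H2_PRIOR_KNOWLEDGE` as cleartext HTTP/2 without an upgrade round trip, so the sketch uses a plain `http://` URL, and the protocol list must contain only that one entry.

```java
import java.io.IOException;
import java.util.Collections;
import okhttp3.OkHttpClient;
import okhttp3.Protocol;
import okhttp3.Request;
import okhttp3.Response;

// Hypothetical helper that builds an HTTP/2-only OkHttp client.
final class Http2ClientSketch {

    static OkHttpClient newPriorKnowledgeClient() {
        return new OkHttpClient.Builder()
            // H2_PRIOR_KNOWLEDGE cannot be combined with other protocols.
            .protocols(Collections.singletonList(Protocol.H2_PRIOR_KNOWLEDGE))
            .build();
    }

    public static void main(String[] args) throws IOException {
        OkHttpClient client = newPriorKnowledgeClient();

        Request request = new Request.Builder()
            .url("http://localhost:8080/ping") // hypothetical endpoint
            .build();

        // execute() throws an IOException if the server does not speak HTTP/2.
        try (Response response = client.newCall(request).execute()) {
            System.out.println(response.protocol() + " " + response.code());
        }
    }
}
```

Because there is no HTTP/1.1 fallback, a misconfigured server surfaces as an exception at call time rather than as a silent downgrade, which is useful while verifying a full migration.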

Migration Result

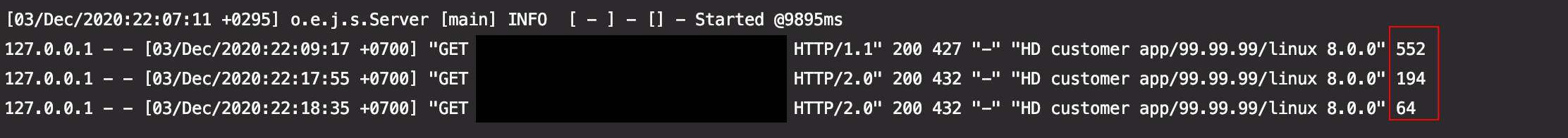

After we completed the migration, we immediately tested the fully multiplexed single TCP connection feature. Hitting the same API with the same payload, HTTP/2 responded with a smaller request time (shown in the line boxed in red in the testing result screenshot). The difference is significant, which gave us more confidence that the migration was the right decision for us.

Notable Challenges

We faced several problems during the migration. One of the most notable was a limitation when sending requests through AWS's load balancer. As stated in its documentation, by default AWS's load balancer converts HTTP/2 requests to HTTP/1.1 before forwarding them to the instance. We can change this, but it requires a complete migration because the load balancer will then block incoming HTTP/1.1 requests. We managed to overcome this because we are using Kubernetes clusters. In Kubernetes clusters, no AWS load balancer is involved because each pod connects to pods in another cluster over the clusters' network.

Summary

Implementing HTTP/2 is a straightforward process. Selecting the client library, including research and a proof of concept, is also necessary because each library has its pros and cons, so we need to choose the one that solves the main problem the migration is meant to address. The most crucial point, however, is to make real use of the protocol and migrate only if it benefits you, not just for the sake of adopting the latest technology. For us, HTTP/2 is beneficial because we wanted connection multiplexing with streams, as the testing result after the migration showed. It decreases load time, especially for calls coming from the front-end side.

Join us

Scalability, reliability, and maintainability are the three pillars that govern what we build at Halodoc Tech. We are actively looking for engineers at all levels and if solving complex problems with challenging requirements is your forte, please reach out to us with your resumé at careers.india@halodoc.com.

About Halodoc

Halodoc is the number 1 all-around Healthcare application in Indonesia. Our mission is to simplify and bring quality healthcare across Indonesia, from Sabang to Merauke.

We connect 20,000+ doctors with patients in need through our Tele-consultation service. We partner with 2500+ pharmacies in 100+ cities to bring medicine to your doorstep. We've also partnered with Indonesia's largest lab provider to provide lab-home services. To top it off, we have recently launched a premium appointment service that partners with 500+ hospitals and allows patients to book a doctor's appointment inside our application.

We are incredibly fortunate to be trusted by our investors, such as the Bill & Melinda Gates Foundation, Singtel, UOB Ventures, Allianz, Gojek, and many more. We recently closed our Series B round and, in total, have raised USD 100 million for our mission.

Our team works tirelessly to ensure that we create the best healthcare solution personalized for all of our patients' needs and are continuously on a path to simplify healthcare for Indonesia.