Building Secure PII Encryption at Scale

Our Challenge

At Halodoc, we handle sensitive personally identifiable information (PII), including bank account numbers, payment details, and patient data, across multiple microservices and databases. As our platform scaled to serve millions of customers, we faced several critical security gaps:

- Cross-service exposure risk — PII is distributed across multiple services within our VPC. While network-level controls reduce external threat surface, a data store compromise in any one service could expose sensitive fields across users — bridging this internal gap was a primary driver for this initiative

- Regulatory non-compliance — Absence of encryption violates data protection laws

- Inconsistent practices — Encryption approaches varied across teams and services, making audits impossible

- Centralised key management gap — No standardised way to rotate keys or track access

- Architectural constraints — Systems needed to work across heterogeneous storage patterns (dedicated columns, EAV models, JSON blobs)

We needed more than a crypto library. We needed a complete system: standardised key management, transparent application-level encryption, zero-downtime migration, and multi-language support.

Solution Overview: Config-Driven Application-Level Encryption

Rather than relying on a single encryption service, we built a distributed encryption model where:

- Keys are config-driven — Managed in a centralized config, versioned per database

- Encryption happens locally — Each service encrypts/decrypts within the application layer

- Vault acts as secure storage — Not as the crypto engine, reducing external service dependencies

- Self-describing envelopes — Ciphertext carries version metadata for backward-compatible key rotation

- Transparent to business logic — ORM hooks handle encryption automatically

Architecture: How It Works

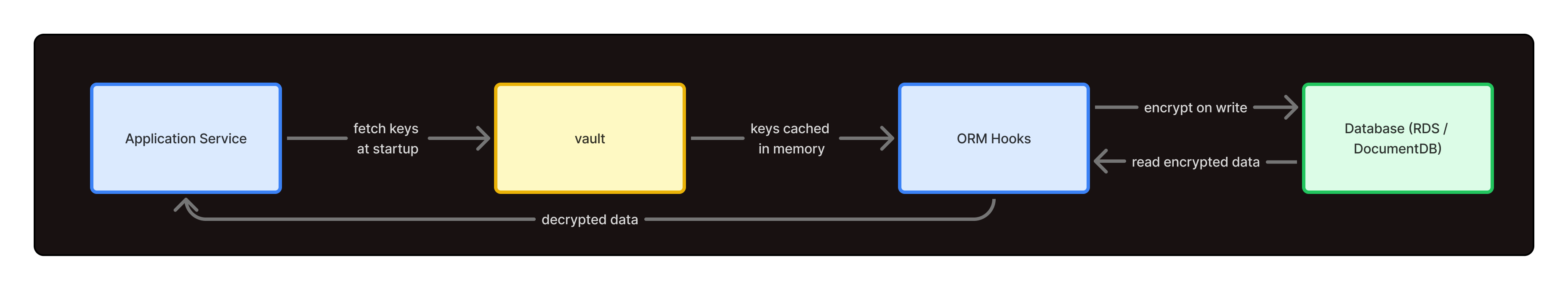

High-Level Flow

Key Components

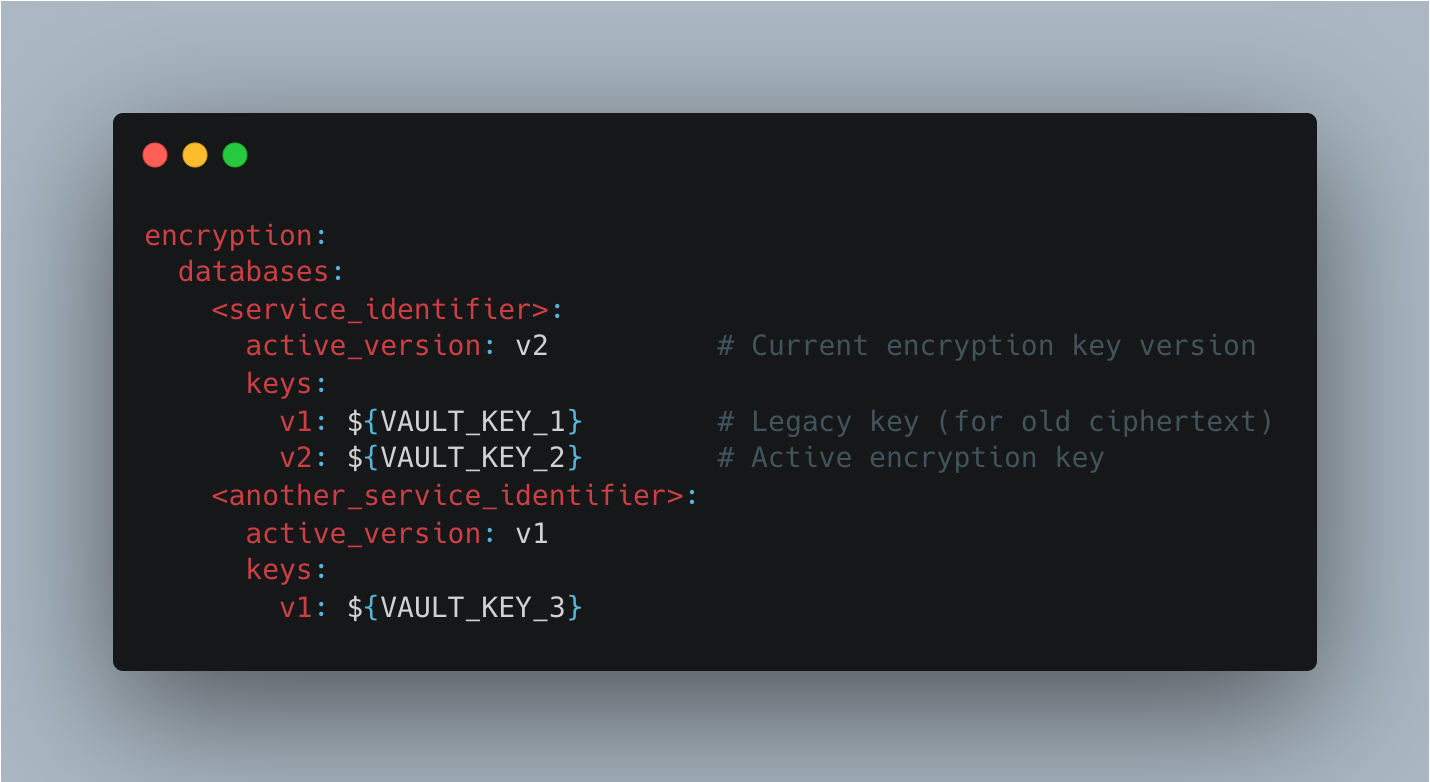

1. Encryption Configuration

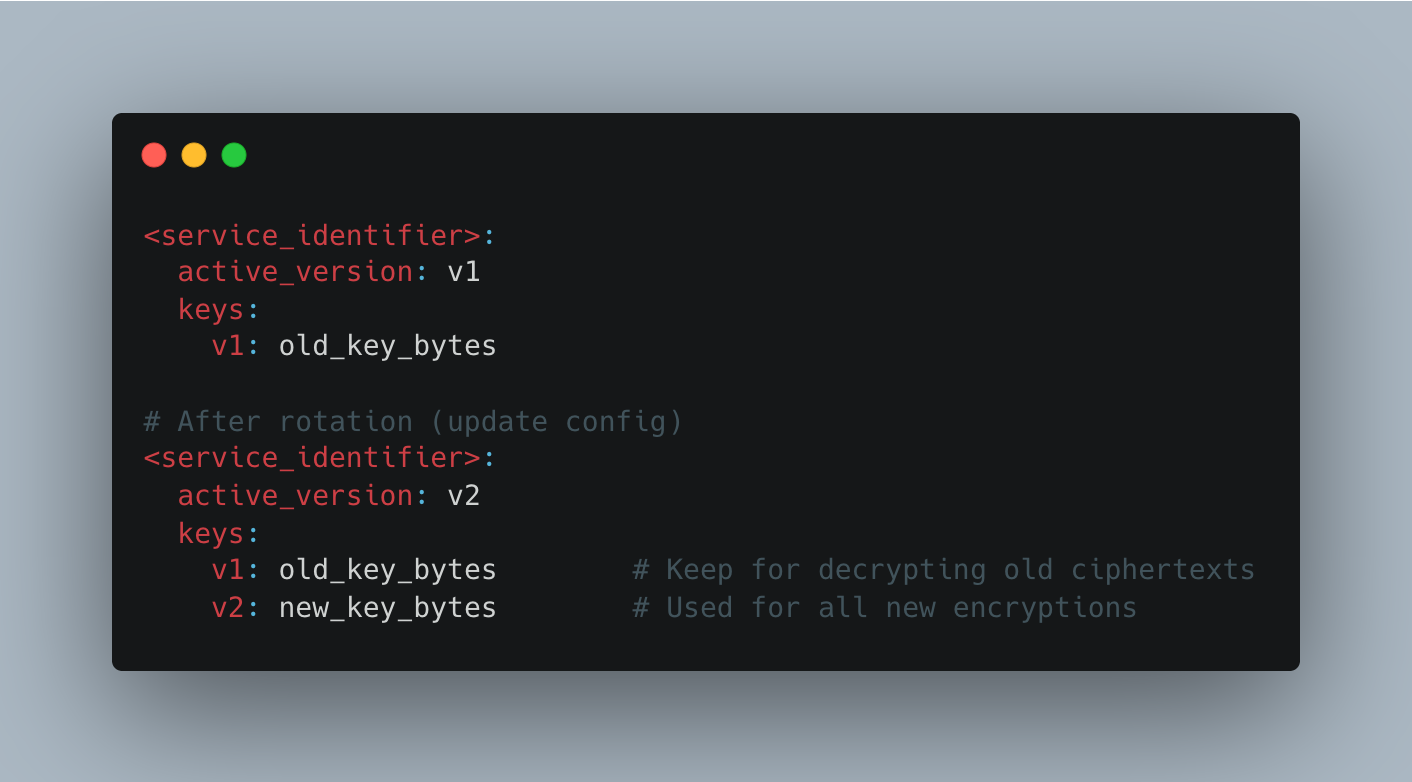

Each database has a versioned key configuration:
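As an illustration only, a versioned key configuration for one database could be modelled as below. This is a minimal sketch: the type name, field names, and the key reference values are assumptions, not the actual Halodoc config schema.

```go
package main

import "fmt"

// DatabaseKeyConfig is a hypothetical shape for one database's entry in the
// central config: the version used for new writes, plus every version that
// must stay available for decrypting older rows.
type DatabaseKeyConfig struct {
	Database      string
	ActiveVersion string
	// Versions maps a version label (e.g. "v1") to a reference that tells the
	// service where to fetch that key. The reference format is an assumption.
	Versions map[string]string
}

// Validate rejects configs whose active version is not declared, so a bad
// rollout fails at startup instead of at the first write.
func (c DatabaseKeyConfig) Validate() error {
	if c.Database == "" {
		return fmt.Errorf("database name is required")
	}
	if len(c.Versions) == 0 {
		return fmt.Errorf("database %q: no key versions declared", c.Database)
	}
	if _, ok := c.Versions[c.ActiveVersion]; !ok {
		return fmt.Errorf("database %q: active version %q is not declared", c.Database, c.ActiveVersion)
	}
	return nil
}

// ActiveKeyRef returns the reference of the key used for new encryptions.
func (c DatabaseKeyConfig) ActiveKeyRef() (string, error) {
	if err := c.Validate(); err != nil {
		return "", err
	}
	return c.Versions[c.ActiveVersion], nil
}
```

Keeping older versions in the same entry is what lets a service keep decrypting rows written before a rotation.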

Why this design?

- Centralised versioning enables consistent encryption across all services

- Service loads only its own keys at startup—no runtime Vault calls

- Version metadata embedded in cipher text allows decryption of old data after rotation

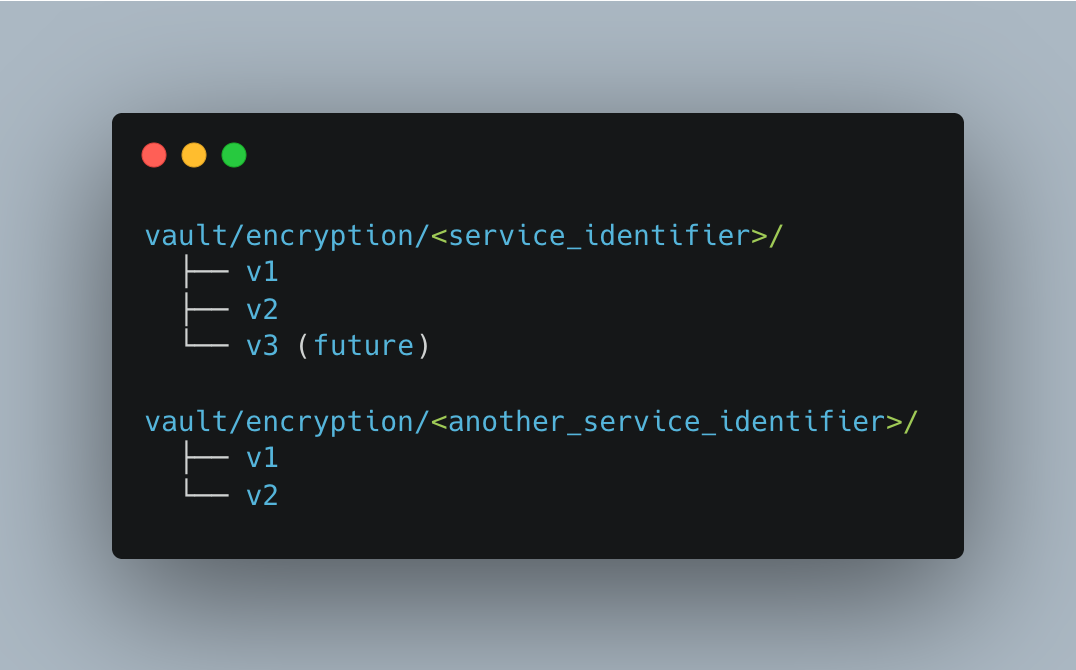

2. Vault Setup

Vault stores AES-256 keys under a logical structure:

Key caching strategy:

- Service fetches all keys for its databases once at startup

- Keys stored in memory as (db_name, version) → SecretKey mappings

- No Vault calls during encryption/decryption → Lower latency, higher availability
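The caching strategy above can be sketched as a read-only map built once at startup. In this sketch `FetchFunc` is a hypothetical stand-in for whatever reads a key from Vault; it is not a Vault client API, and the type and function names are assumptions.

```go
package main

import "fmt"

// keyID mirrors the (db_name, version) lookup key.
type keyID struct {
	DB      string
	Version string
}

// KeyCache holds raw AES-256 keys in memory. It is only written during
// LoadKeyCache, so concurrent reads afterwards need no locking.
type KeyCache struct {
	keys map[keyID][]byte
}

// FetchFunc is a hypothetical hook that reads one key from secure storage.
type FetchFunc func(db, version string) ([]byte, error)

// LoadKeyCache fetches every declared key exactly once and fails fast if any
// key is missing or is not 32 bytes long.
func LoadKeyCache(versionsByDB map[string][]string, fetch FetchFunc) (*KeyCache, error) {
	cache := &KeyCache{keys: make(map[keyID][]byte)}
	for db, versions := range versionsByDB {
		for _, v := range versions {
			key, err := fetch(db, v)
			if err != nil {
				return nil, fmt.Errorf("load key %s/%s: %w", db, v, err)
			}
			if len(key) != 32 {
				return nil, fmt.Errorf("key %s/%s: want 32 bytes, got %d", db, v, len(key))
			}
			cache.keys[keyID{DB: db, Version: v}] = key
		}
	}
	return cache, nil
}

// Get returns a cached key without any network call.
func (c *KeyCache) Get(db, version string) ([]byte, bool) {
	key, ok := c.keys[keyID{DB: db, Version: version}]
	return key, ok
}
```

Failing at startup when a key is missing keeps the failure in the deployment path rather than in a live request.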

3. Self-Describing Envelope Format

Every encrypted value follows a simple versioned envelope:

{ENCRYPTION_PREFIX}:{ACTIVE_VERSION}:<base64_payload>

Where payload contains:

- 12-byte nonce (random, unique per encryption). With random 96-bit nonces, the birthday bound puts the collision probability under a single key at roughly n²/2⁹⁷ after n encryptions: about 1 in 2³³ after ~4 billion (2³²) encryptions, which is the per-key limit NIST SP 800-38D sets for randomly generated nonces. At Halodoc's current data volumes this risk is negligible, but teams handling significantly higher write volumes should rotate keys more often or consider a counter-based (deterministic) nonce scheme to eliminate the risk entirely.

- Cipher text (same size as plaintext)

- 16-byte GCM authentication tag (detects tampering)

Why this format?

- Version is human-readable – {ENCRYPTION_PREFIX}:{ACTIVE_VERSION} tells us which key was used

- Single BASE64 string fits in VARCHAR columns

- Tag provides integrity checking—corrupted data fails decryption with clear errors
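To make the envelope concrete, here is a minimal sketch of sealing and opening a value with AES-256-GCM from Go's standard library. The prefix `ENC` is a placeholder for {ENCRYPTION_PREFIX}, and the function names and the `keys` map are assumptions for illustration rather than the production code.

```go
package main

import (
	"crypto/aes"
	"crypto/cipher"
	"crypto/rand"
	"encoding/base64"
	"errors"
	"fmt"
	"strings"
)

// Prefix is a placeholder for {ENCRYPTION_PREFIX}; the real value is not shown here.
const Prefix = "ENC"

func newGCM(key []byte) (cipher.AEAD, error) {
	if len(key) != 32 {
		return nil, errors.New("AES-256 requires a 32-byte key")
	}
	block, err := aes.NewCipher(key)
	if err != nil {
		return nil, err
	}
	return cipher.NewGCM(block)
}

// Seal returns "ENC:<version>:<base64(nonce || ciphertext || tag)>".
// The version label must not contain ':'.
func Seal(version string, key []byte, plaintext string) (string, error) {
	gcm, err := newGCM(key)
	if err != nil {
		return "", err
	}
	nonce := make([]byte, gcm.NonceSize()) // 12 random bytes
	if _, err := rand.Read(nonce); err != nil {
		return "", err
	}
	// Passing nonce as dst makes Seal append ciphertext and tag after it.
	payload := gcm.Seal(nonce, nonce, []byte(plaintext), nil)
	return Prefix + ":" + version + ":" + base64.StdEncoding.EncodeToString(payload), nil
}

// Open picks the key named by the envelope's version and verifies the tag.
func Open(keys map[string][]byte, envelope string) (string, error) {
	parts := strings.SplitN(envelope, ":", 3)
	if len(parts) != 3 || parts[0] != Prefix {
		return "", errors.New("value is not an encrypted envelope")
	}
	key, ok := keys[parts[1]]
	if !ok {
		return "", fmt.Errorf("no key loaded for version %q", parts[1])
	}
	gcm, err := newGCM(key)
	if err != nil {
		return "", err
	}
	payload, err := base64.StdEncoding.DecodeString(parts[2])
	if err != nil {
		return "", fmt.Errorf("malformed payload: %w", err)
	}
	if len(payload) < gcm.NonceSize()+gcm.Overhead() {
		return "", errors.New("payload too short")
	}
	nonce, sealed := payload[:gcm.NonceSize()], payload[gcm.NonceSize():]
	plain, err := gcm.Open(nil, nonce, sealed, nil)
	if err != nil {
		return "", fmt.Errorf("authentication failed (wrong key or tampered data): %w", err)
	}
	return string(plain), nil
}
```

Because standard Base64 never emits a colon, splitting on the first two colons is enough to recover the prefix, version, and payload.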

Why Config-Based Over Vault Transit Engine?

We evaluated Vault's Transit Engine but chose config-based key management for one simple reason: operational independence.

The Trade-Off

| Aspect | Vault Transit Engine | Config-Based |
|---|---|---|
| Runtime Calls | Every encrypt/decrypt hits Vault API | Keys cached at startup, encrypt locally |
| Latency | Network roundtrip (milliseconds) | In-memory operation (microseconds) |
| Outage Impact | Vault down = encryption down | Vault only needed for deployments |
| Key Storage | Wrapped DEK with every field (200-300 bytes) | Only nonce + ciphertext + tag |
| Switching Providers | Full data rewrap required | Update config, that's it |
| Version Management | Vault-specific metadata (vault:v1:...) | Self-describing envelope ({ENCRYPTION_PREFIX}:v2:...) |
| Debugging | Depends on Vault logs | Version in plaintext, human-readable |

The Bottom Line

Vault Transit Engine makes sense when you want Vault to own the entire encryption lifecycle. Config-based makes sense when you want encryption to be invisible—keys cached, encryption local, Vault just a secure vault.

For Halodoc's scale and multi-service architecture, we needed the latter: encryption that doesn't slow down production, doesn't add a critical dependency, and doesn't require rewrapping data to migrate providers.

Implementation Across Storage Patterns

Given the constraints of a live migration across an existing production system, we encountered three distinct storage patterns in use. Each required a different adaptation strategy.

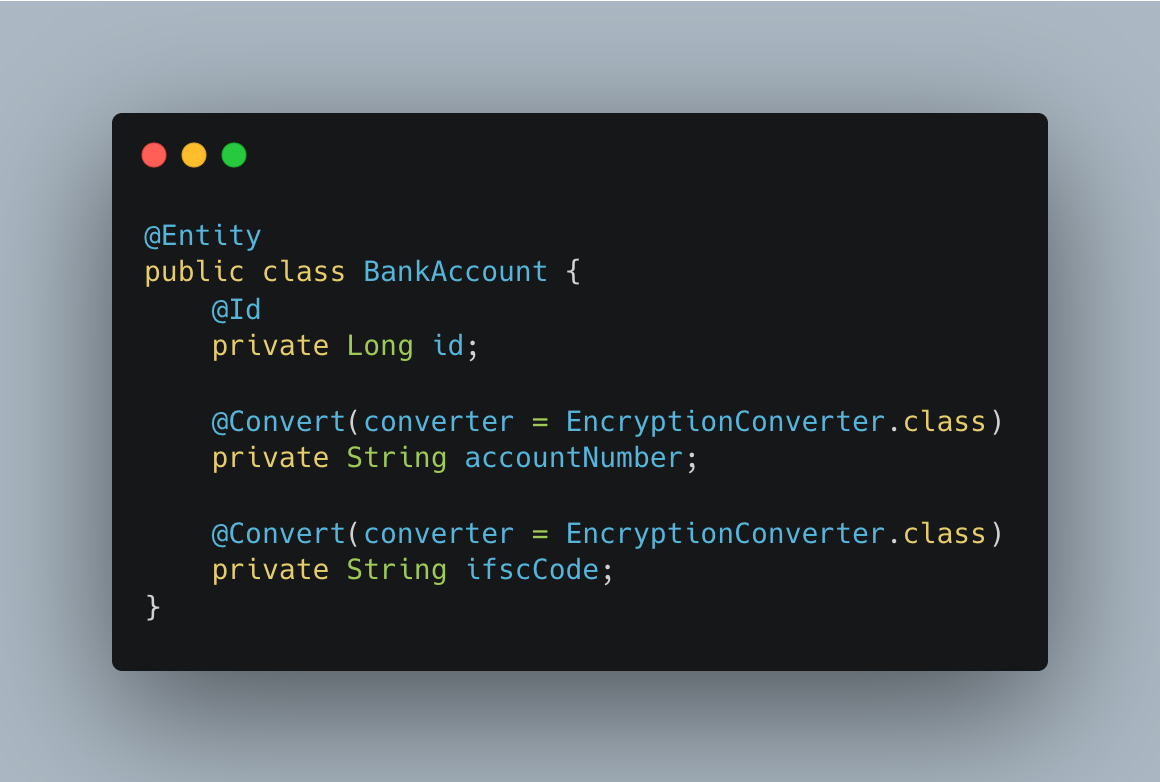

Pattern 1: Dedicated Column (Most Common)

Example: BankAccount.accountNumber

Java Implementation:

The AttributeConverter intercepts data:

- convertToDatabaseColumn() — Encrypts plaintext before INSERT/UPDATE

- convertToEntityAttribute() — Decrypts after SELECT

No service code changes needed.

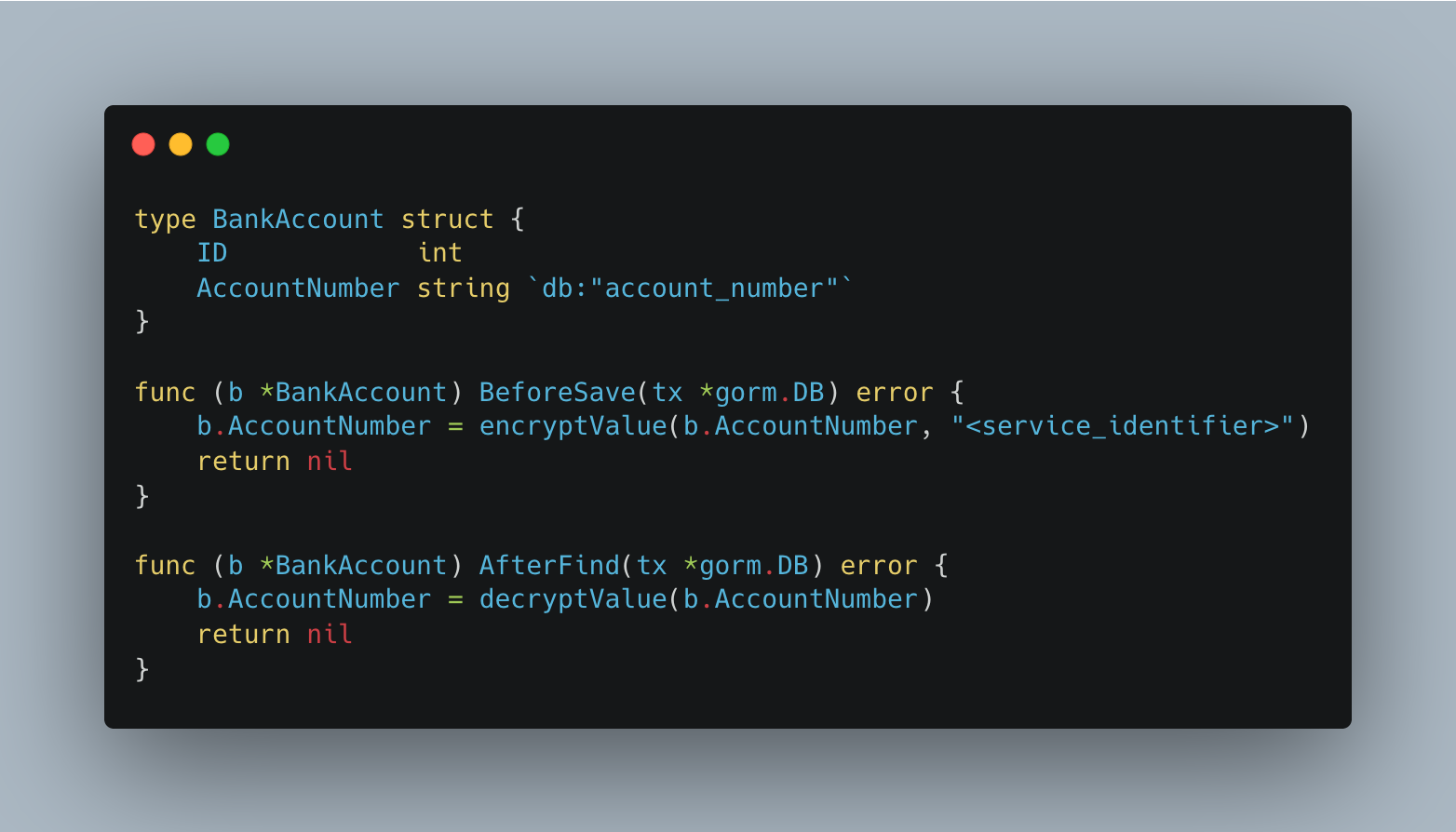

Go Implementation:
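A minimal sketch of how the dedicated-column pattern could look in Go, not the production code: a field type that implements `driver.Valuer` and `sql.Scanner` from the standard `database/sql` packages, so values are encrypted before writes and decrypted after reads. The `EncryptedString` type and the `Encrypt`/`Decrypt` hooks are assumptions; the hooks would be wired to the service's envelope encryption at startup.

```go
package main

import (
	"database/sql"
	"database/sql/driver"
	"errors"
	"fmt"
)

// Encrypt and Decrypt are hypothetical hooks set once at startup.
var (
	Encrypt func(plaintext string) (string, error)
	Decrypt func(envelope string) (string, error)
)

// EncryptedString holds plaintext in memory and an envelope in the database.
type EncryptedString string

// Compile-time checks that the type satisfies both interfaces.
var (
	_ driver.Valuer = EncryptedString("")
	_ sql.Scanner   = (*EncryptedString)(nil)
)

// Value is called by database/sql before INSERT/UPDATE.
func (s EncryptedString) Value() (driver.Value, error) {
	if Encrypt == nil {
		return nil, errors.New("encrypt hook not configured")
	}
	envelope, err := Encrypt(string(s))
	if err != nil {
		return nil, err
	}
	return envelope, nil
}

// Scan is called by database/sql after SELECT.
func (s *EncryptedString) Scan(src interface{}) error {
	var stored string
	switch v := src.(type) {
	case nil:
		*s = ""
		return nil
	case string:
		stored = v
	case []byte:
		stored = string(v)
	default:
		return fmt.Errorf("unsupported column type %T", src)
	}
	if Decrypt == nil {
		return errors.New("decrypt hook not configured")
	}
	plain, err := Decrypt(stored)
	if err != nil {
		return err
	}
	*s = EncryptedString(plain)
	return nil
}
```

A model field declared as `AccountNumber EncryptedString` would then need no changes in service code; whether a given ORM honours these interfaces should be checked against its documentation.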

Why this pattern first?

- Simplest to implement and audit

- Single encrypted field per column

- No schema changes beyond column type updates (VARCHAR(255))

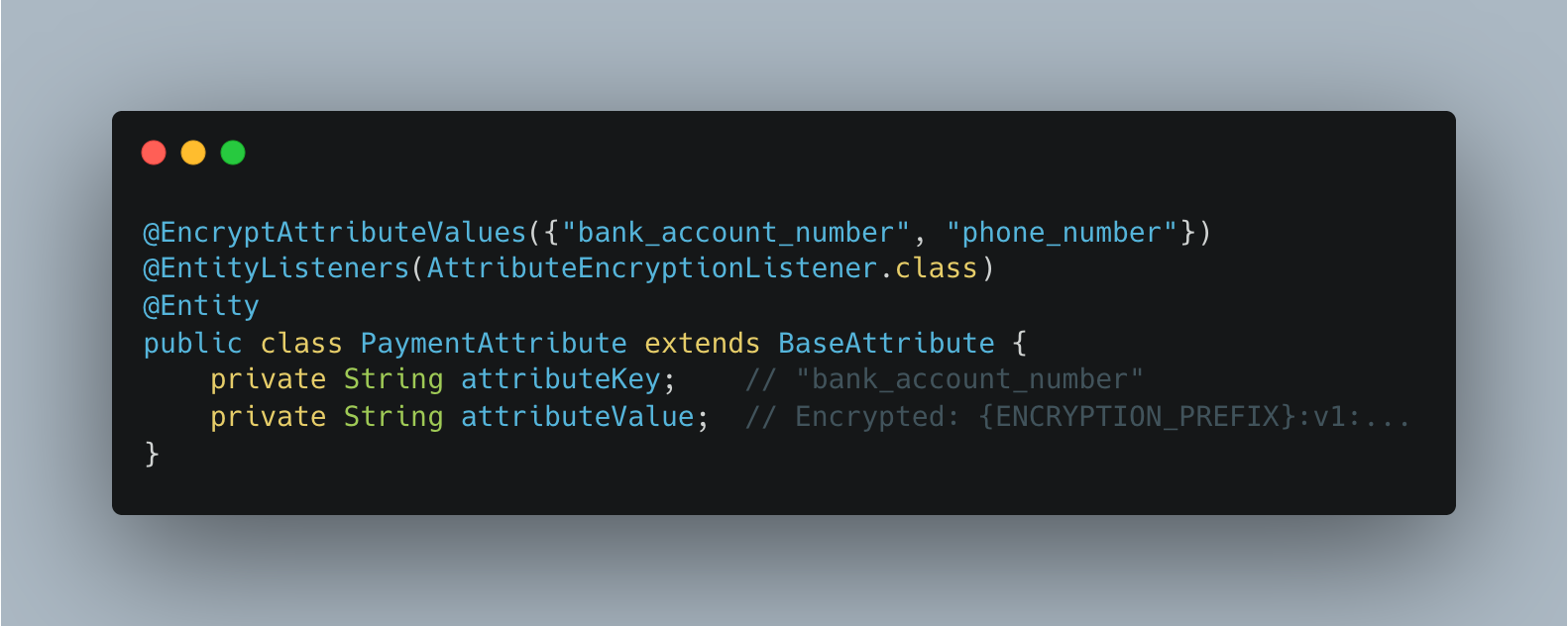

Pattern 2: EAV (Entity-Attribute-Value)

Example: PaymentAttribute where attribute_key = "bank_account_number"

The challenge: Encrypt only some attributes, not all.

Java Implementation:

The listener (triggered on @PrePersist, @PreUpdate, @PostLoad) checks the attributeKey and encrypts/decrypts only declared sensitive keys.

Go Implementation:
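As a hedged sketch of the same idea in Go: save and load hooks that touch only attribute keys declared as sensitive. The `PaymentAttribute` fields, the hook names, and the hard-coded `sensitiveKeys` set are assumptions; in practice the set would come from config.

```go
package main

// sensitiveKeys is the declared set of attribute keys to protect.
// It is hard-coded here for illustration only.
var sensitiveKeys = map[string]bool{
	"bank_account_number": true,
}

// PaymentAttribute is a simplified EAV row.
type PaymentAttribute struct {
	AttributeKey   string
	AttributeValue string
}

// BeforeSave encrypts the value only when the key is declared sensitive.
// The encrypt function is a hypothetical hook supplied by the caller.
func BeforeSave(a *PaymentAttribute, encrypt func(string) (string, error)) error {
	if !sensitiveKeys[a.AttributeKey] {
		return nil // non-sensitive attributes are stored as-is
	}
	envelope, err := encrypt(a.AttributeValue)
	if err != nil {
		return err
	}
	a.AttributeValue = envelope
	return nil
}

// AfterLoad decrypts the value only when the key is declared sensitive.
func AfterLoad(a *PaymentAttribute, decrypt func(string) (string, error)) error {
	if !sensitiveKeys[a.AttributeKey] {
		return nil
	}
	plain, err := decrypt(a.AttributeValue)
	if err != nil {
		return err
	}
	a.AttributeValue = plain
	return nil
}
```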

Key insight: Encryption logic is declarative. Adding a new sensitive attribute requires only a config update.

Pattern 3: JSON Blob — Why We Didn't Encrypt In-Place

Selective in-place JSON field encryption is technically possible, but introduces significant complexity: you need field-path awareness in the encryptor, the JSON structure must be stable enough to target specific keys reliably, and partial decryption on read adds overhead with little benefit over extraction. For these reasons, we chose not to build a JSON-native encryption path. Instead:

For stable, structured JSON:

- Extract PII fields into dedicated columns

- Apply the Dedicated Column Pattern

For dynamic, log-like JSON:

- Store masked placeholders (e.g., "account_number": "****...1234")

- Never store plaintext PII in JSON blobs

Masking trades encryption strength for operational simplicity in unstructured log data — a deliberate tradeoff, not a gap.

This avoids the complexity of selective JSON field encryption and aligns with compliance best practices.
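For the masking path, a small helper is usually enough. The sketch below assumes the `****...1234` placeholder shape shown above and keeps the last four characters; the function name and the choice of four visible characters are assumptions.

```go
package main

import "strings"

// MaskAccountNumber replaces everything except the last four characters with
// a fixed placeholder, so the masked value does not reveal the original length.
// Values of four characters or fewer are fully masked.
func MaskAccountNumber(accountNumber string) string {
	const visible = 4
	r := []rune(accountNumber)
	if len(r) <= visible {
		return strings.Repeat("*", len(r))
	}
	return "****..." + string(r[len(r)-visible:])
}
```

Masking is one-way, so it only suits fields that never need to be read back in full.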

Key Rotation: Simple & Safe

Rotation is purely configuration-driven:

How it works:

- Generate new AES-256 key

- Store in Vault under vault/encryption/<service_identifier>

- Update config: set active_version: <active_version>

- Deploy updated config

- All new encryptions use the latest version; previously encrypted values keep their older version tags and remain decryptable.

No data rewrapping needed. Existing ciphertexts remain untouched.

A compromised key puts all data encrypted under it at risk. At Halodoc, we account for this by maintaining an audit log of key lifetimes and a documented runbook for bulk re-encryption — ensuring that if a key is retired for cause, the response is immediate and traceable.
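Because every envelope names its key version, finding the rows that need bulk re-encryption after a key is retired can be a simple scan. The sketch below assumes the placeholder prefix `ENC` and hypothetical helper names; it only identifies affected values and leaves the re-encryption itself to the runbook.

```go
package main

import "strings"

// prefix is a placeholder for {ENCRYPTION_PREFIX}.
const prefix = "ENC"

// VersionOf extracts the key version from an envelope.
// ok is false for values that are not envelopes (e.g. legacy plaintext).
func VersionOf(value string) (version string, ok bool) {
	parts := strings.SplitN(value, ":", 3)
	if len(parts) != 3 || parts[0] != prefix || parts[1] == "" {
		return "", false
	}
	return parts[1], true
}

// RowsUnderVersion returns the indexes of values sealed under the given
// version, i.e. the rows to re-encrypt if that key is retired for cause.
func RowsUnderVersion(values []string, retired string) []int {
	var affected []int
	for i, v := range values {
		if version, ok := VersionOf(v); ok && version == retired {
			affected = append(affected, i)
		}
	}
	return affected
}
```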

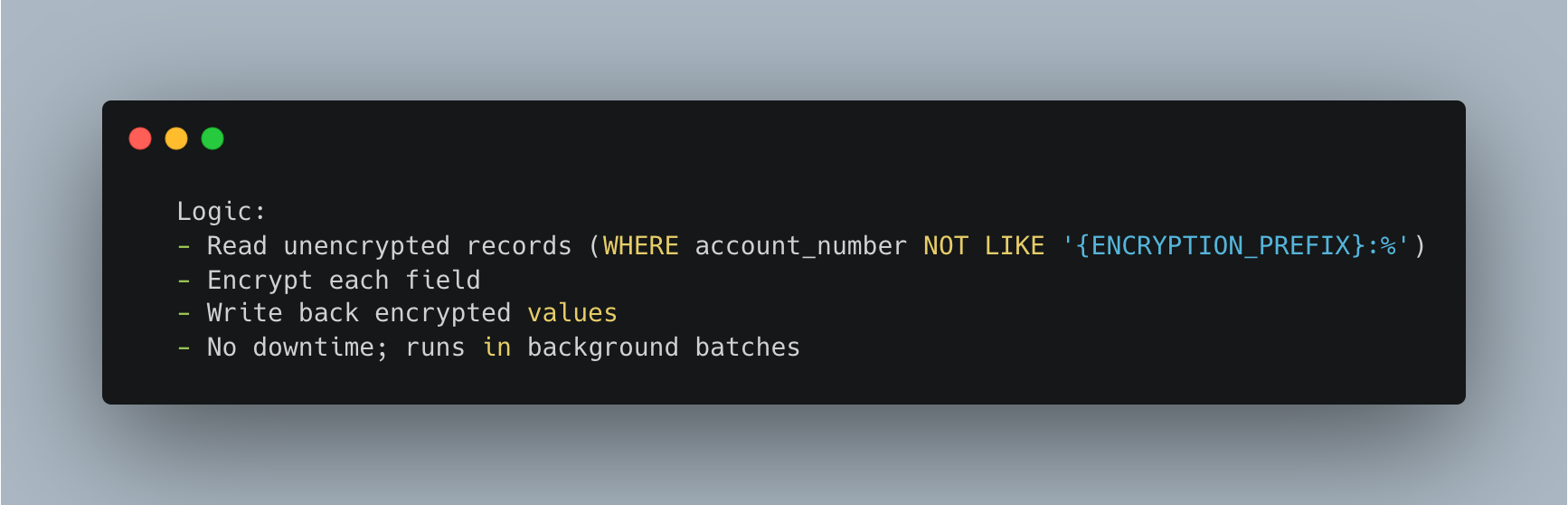

Zero-Downtime Migration

We split the rollout into two phases to ensure no service ever encounters data it cannot read.

Phase 1 — Decryption rollout (backward-compatible reads)

Deploy encryption-aware code while writes remain unencrypted. Every service can now read both formats, and because no stored data changes, there is no risk to existing data.

Phase 2 — Encryption rollout (write enablement)

Once all services are confirmed decryption-capable, enable the write path. New writes produce encrypted envelopes; existing unencrypted rows remain readable via Phase 1 logic. Background migration jobs then sweep the remaining unencrypted rows in batches, with no table locks and no downtime.
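The two phases can be sketched as a tolerant read path plus a write path behind a flag. This is a simplified illustration: `FieldCodec`, the `WritesEnabled` flag, and the `ENC:` prefix are assumptions, and the `Encrypt`/`Decrypt` hooks are expected to be set before use.

```go
package main

import "strings"

// envelopePrefix is a placeholder for "{ENCRYPTION_PREFIX}:".
const envelopePrefix = "ENC:"

// FieldCodec wraps the encryption hooks with the rollout switch.
type FieldCodec struct {
	WritesEnabled bool // false in Phase 1, true in Phase 2
	Encrypt       func(plaintext string) (string, error)
	Decrypt       func(envelope string) (string, error)
}

// Read accepts both formats: envelopes are decrypted, anything else is
// treated as a legacy plaintext row and returned unchanged.
func (c FieldCodec) Read(stored string) (string, error) {
	if strings.HasPrefix(stored, envelopePrefix) {
		return c.Decrypt(stored)
	}
	return stored, nil
}

// Write stays in plaintext until Phase 2 turns the flag on.
func (c FieldCodec) Write(plaintext string) (string, error) {
	if !c.WritesEnabled {
		return plaintext, nil
	}
	return c.Encrypt(plaintext)
}
```

Prefix detection assumes no legitimate plaintext value starts with the envelope prefix, which is worth verifying per column before rollout.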

With both phases live, service-owned migration jobs run in the background.

The MIGRATE endpoint is secured behind internal service authentication and is not exposed externally. Batch jobs are idempotent: already-encrypted rows (detected via the envelope prefix) are skipped on re-run, so a batch that fails midway can be safely re-triggered without double-encrypting data.

Archival data in DocumentDB follows the same pattern as well.
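The idempotency property of the migration batches can be illustrated with an in-memory sketch. The `Row` type, the `MigrateBatch` name, and the `ENC:` prefix are assumptions; a real job would read and write database rows in bounded batches.

```go
package main

import (
	"fmt"
	"strings"
)

// envelopePrefix is a placeholder for "{ENCRYPTION_PREFIX}:".
const envelopePrefix = "ENC:"

// Row is a simplified record with one PII column.
type Row struct {
	ID            int
	AccountNumber string
}

// MigrateBatch encrypts plaintext rows in place and skips rows that already
// hold an envelope, so re-running it never double-encrypts. It returns the
// number of rows encrypted in this run.
func MigrateBatch(rows []Row, encrypt func(string) (string, error)) (int, error) {
	migrated := 0
	for i := range rows {
		if strings.HasPrefix(rows[i].AccountNumber, envelopePrefix) {
			continue // already encrypted by a previous run or a live write
		}
		envelope, err := encrypt(rows[i].AccountNumber)
		if err != nil {
			// Rows handled so far stay encrypted; a retry resumes safely.
			return migrated, fmt.Errorf("row %d: %w", rows[i].ID, err)
		}
		rows[i].AccountNumber = envelope
		migrated++
	}
	return migrated, nil
}
```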

Performance & Storage Impact

Latency: Negligible

To validate the overhead introduced by the encryption layer, we ran a controlled test measuring per-operation read/write impact. The results: ~51 ms median request latency, with encryption adding under 2% overhead, which is effectively negligible in practice.

Why so small?

- AES-256-GCM is hardware-accelerated on modern CPUs

- No external calls (keys are fetched from Vault once and cached at startup)

- Encryption happens in microseconds

Note: This test was designed to measure encryption overhead directionally, not to simulate production-scale load. It confirms that AES-256-GCM adds negligible per-request cost; full throughput benchmarking under peak production traffic is tracked separately.

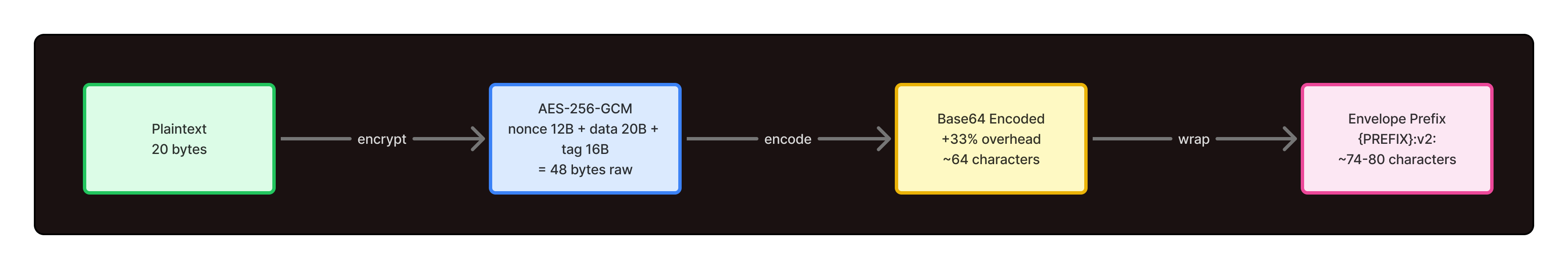

Storage: ~3.6x–3.8x Growth

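A 20-character account number expands to approximately 74–80 characters after encryption and envelope encoding. The 12-byte nonce, 20 bytes of ciphertext, and 16-byte tag total 48 bytes, which Base64-encode to 64 characters; the prefix and version label account for the remainder.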

For large datasets:

- Increase VARCHAR from (100) to (400) for safety

- DocumentDB has no column-size limits

- Disk cost minimal; encryption benefits justify overhead
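When sizing columns, the envelope length can be computed directly from the format. The sketch below assumes standard padded Base64 and uses a placeholder prefix and version, so the exact total depends on the real {ENCRYPTION_PREFIX} and version label.

```go
package main

import "encoding/base64"

const (
	nonceLen = 12 // GCM nonce size in bytes
	tagLen   = 16 // GCM authentication tag size in bytes
)

// EnvelopeLen returns the stored length in characters of
// "<prefix>:<version>:<base64(nonce || ciphertext || tag)>"
// for a plaintext of plaintextLen bytes.
func EnvelopeLen(prefix, version string, plaintextLen int) int {
	payloadBytes := nonceLen + plaintextLen + tagLen
	return len(prefix) + 1 + len(version) + 1 + base64.StdEncoding.EncodedLen(payloadBytes)
}
```

For example, `EnvelopeLen("ENC", "v1", 20)` gives 71: a 64-character payload plus a 7-character header. A longer prefix or version label increases the total accordingly.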

Conclusion

Encryption doesn't require complexity. By embedding version metadata in cipher texts, caching keys at startup, and treating encryption as a data-layer concern, we built a system that is:

- Secure — AES-256-GCM with authenticated encryption

- Scalable — Linear performance impact, simple migration path

- Operational — Zero-downtime deployments, config-driven rotation

- Portable — Works across Java, Go, and multiple storage patterns

The result: PII encryption that feels transparent to application logic while protecting millions of users' sensitive data and meeting regulatory requirements.

This encryption foundation has also enabled Halodoc to scale our healthcare services—from Chat with Doctor to Health Store—with the confidence that user financial and personal data remains protected.
