Common Load Balancer and Cost Optimisation

At Halodoc, we utilize AWS Kubernetes to deploy each of our micro-services, and each service comes equipped with its own dedicated internal load balancer. We recognize the importance of efficiency and cost-effectiveness in the constantly evolving digital landscape. Our ongoing mission is to optimise our infrastructure costs while ensuring the high performance and availability of our services.

In this blog post, we're excited to share with you the approach we've taken by implementing common load balancers. We've found a way to enhance the performance and scalability of our services while simultaneously reducing costs. These load balancers act like traffic managers, making sure that our micro-services run smoothly, efficiently, and without interruptions.

Introduction:

Micro-services represent a shift from monolithic application design, breaking down complex software systems into smaller, independent services that can be developed, deployed, and maintained more efficiently. Yet the very nature of micro-services introduces unique challenges, such as orchestrating service-to-service communication, maintaining a uniform level of performance, and managing the influx of traffic. This is where Application Load Balancers (ALB) and AWS Kubernetes come into play.

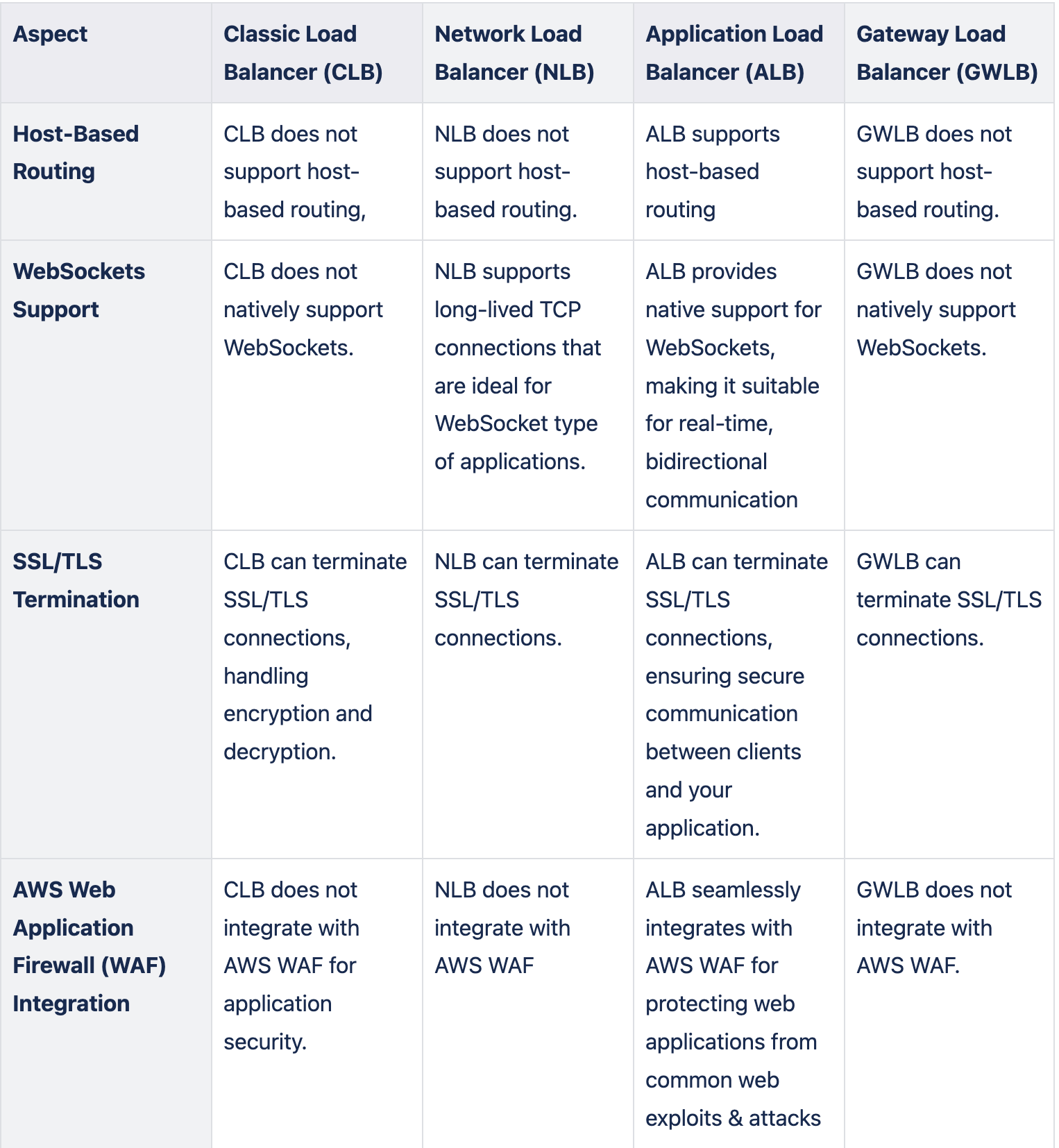

Elastic Load Balancing (ELB) is a service provided by Amazon Web Services (AWS) that automatically distributes incoming application traffic across multiple targets, such as Amazon EC2 instances, containers, and IP addresses. It ensures that requests from users and external services are intelligently distributed among the micro-services, guaranteeing even load distribution, failover support, and ultimately a consistent, high-quality user experience.

AWS Kubernetes serves as the foundation for deploying and managing our micro-services, offering scalability and flexibility. Application Load Balancers (ALB) add host-based routing for more precise control: incoming traffic is directed according to the Host header (the domain name) in the HTTP request, so each request can be routed to a specific micro-service based on the hostname it was sent to. ALB is the ELB load balancer type that operates at the application layer; besides host-based routing, it supports HTTP header-based routing and integration with AWS WAF. By combining ALB with Kubernetes ingress resources and their corresponding annotations, we've created a robust and adaptable load balancing solution for our micro-services architecture, one that keeps load distribution and failover support intact while delivering a consistent, high-quality user experience.

What is host-based routing in ALB?

Host-based routing is an advanced feature of ALBs that routes incoming requests to specific micro-services based on the hostname provided in the HTTP request. This capability lets a single load balancer serve multiple micro-services while maintaining a logical separation between them. Here's how host-based routing works and why it's advantageous in the micro-services architecture at Halodoc:

- Multi-Service Hosting: Host-based routing allows you to host multiple micro-services on a single domain or IP address, differentiating them by hostname. For example, we can route requests for service-a.example.com to one micro-service and requests for service-b.example.com to another.

- Logical Separation: By using different hostnames, we can create a clear separation between micro-services, making it easier to manage and scale them individually. This separation is especially valuable when different teams are responsible for different micro-services.
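
As a minimal sketch of the two points above, a Kubernetes Ingress can carry one rule per hostname. The hostnames are the ones from the example; the resource name, Service names, and port are placeholders rather than our production values, and the manifest assumes the AWS Load Balancer Controller is installed with an IngressClass named alb.

```yaml
# Illustrative only: resource name, Service names and port are assumed placeholders.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: host-routing-example
  annotations:
    alb.ingress.kubernetes.io/scheme: internal   # internal ALB, not internet-facing
    alb.ingress.kubernetes.io/target-type: ip    # register pod IPs as targets (assumes the VPC CNI)
spec:
  ingressClassName: alb
  rules:
    - host: service-a.example.com                # requests with this Host header...
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: service-a                  # ...are forwarded to this Service
                port:
                  number: 80
    - host: service-b.example.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: service-b
                port:
                  number: 80
```

Each `host` entry becomes a listener rule on the load balancer, which is what keeps the two micro-services logically separate even though they share one entry point.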

Best Practices for Host-Based Routing:

- Clear Naming Conventions: Maintain a clear and consistent naming convention for hostnames to ensure easy management and avoid confusion.

- DNS Configuration: Ensure that the DNS records for your host-based routes are correctly set up to point to your ALB's DNS name.

- Monitoring: Implement robust monitoring and alerting for host-based routes to detect any issues promptly.

- Backup and Rollback: When deploying new versions of micro-services, have a rollback plan in place and ensure that the ALB's host-based routing can be easily reverted if issues arise.

- SSL Certificate Matching Hostname/Domain Name: Ensure that SSL certificates are correctly matched to the hostname or domain name specified in ingress resources. This means that the Common Name (CN) or Subject Alternative Name (SAN) of the SSL certificate should align with the hostname or domain names defined in ingress rules.
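
For the certificate point in particular, the relevant settings usually live in the ingress annotations. The fragment below is a sketch only: the ARN is a placeholder with its specifics left unfilled, and it assumes the certificate is stored in AWS Certificate Manager.

```yaml
# Fragment of an Ingress's metadata; the ARN is a placeholder, not a real certificate.
metadata:
  annotations:
    # Terminate HTTPS on port 443 at the ALB.
    alb.ingress.kubernetes.io/listen-ports: '[{"HTTPS": 443}]'
    # The certificate's CN or SAN should cover every host listed under spec.rules,
    # for example a wildcard *.example.com for service-a.example.com.
    alb.ingress.kubernetes.io/certificate-arn: "arn:aws:acm:<region>:<account-id>:certificate/<certificate-id>"
```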

Implementation using a common load balancer at Halodoc

As part of our cost optimisation initiatives, we've implemented a common strategy for load balancing in Kubernetes. This strategy makes use of Kubernetes ingress annotations to streamline the configuration and management of a single load balancer across various namespaces.

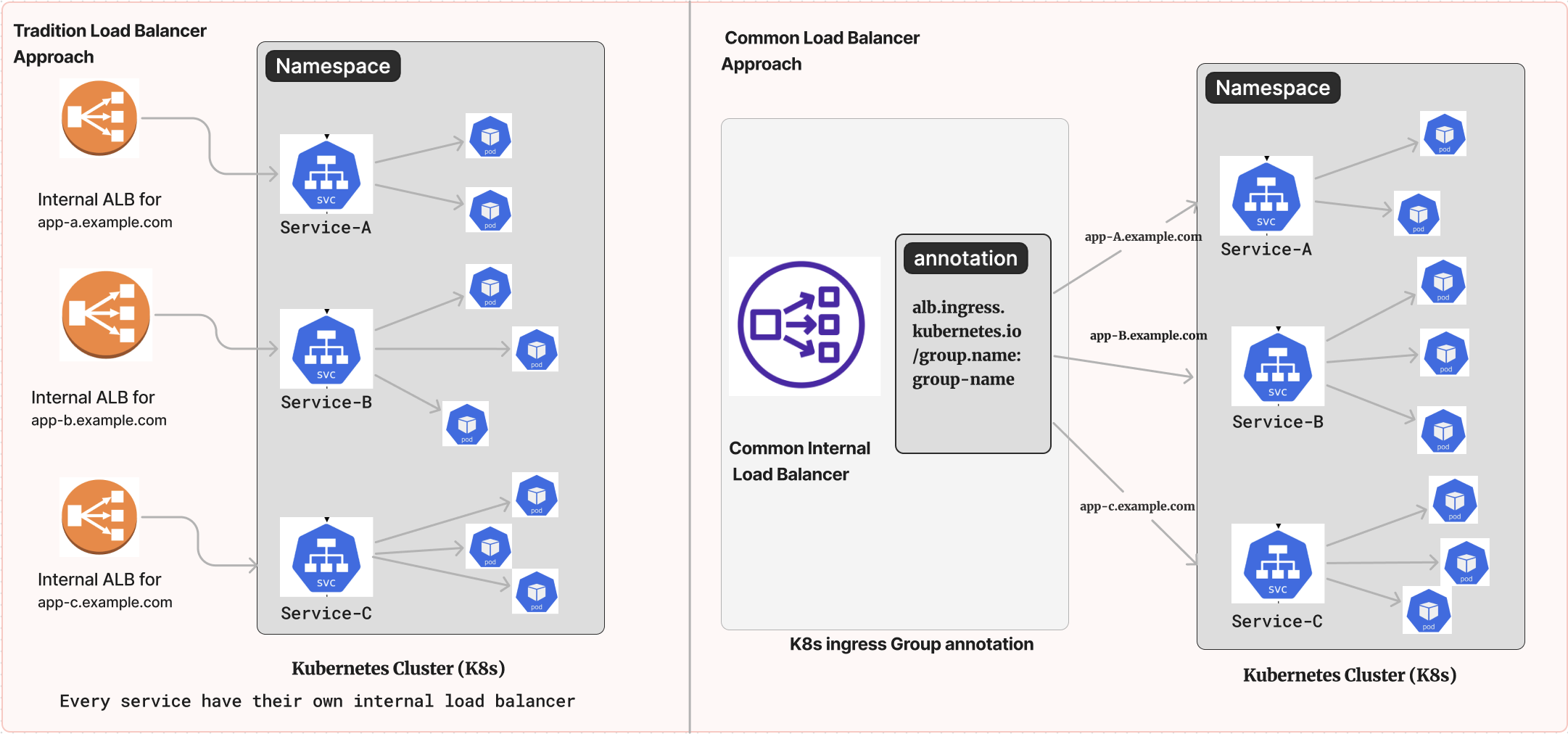

In the traditional approach, each micro-service has its own internal ingress resource configuration, specifying how traffic should be routed to that micro-service. This configuration can be entirely independent, meaning that each micro-service has its own load balancer and load balancing settings. This approach works well when you want complete isolation and autonomy for each micro-service, but it can lead to resource duplication and increased management overhead.

On the other hand, the Kubernetes ingress group annotation approach allows us to create a single load balancer that spans multiple namespaces. Each micro-service still keeps its own ingress resource; ingress resources that carry the same group annotation are merged by the AWS Load Balancer Controller and served by one shared ALB. This promotes resource consolidation, cost efficiency, and centralised management, while still allowing individual micro-services to define specific routing and configuration where needed.

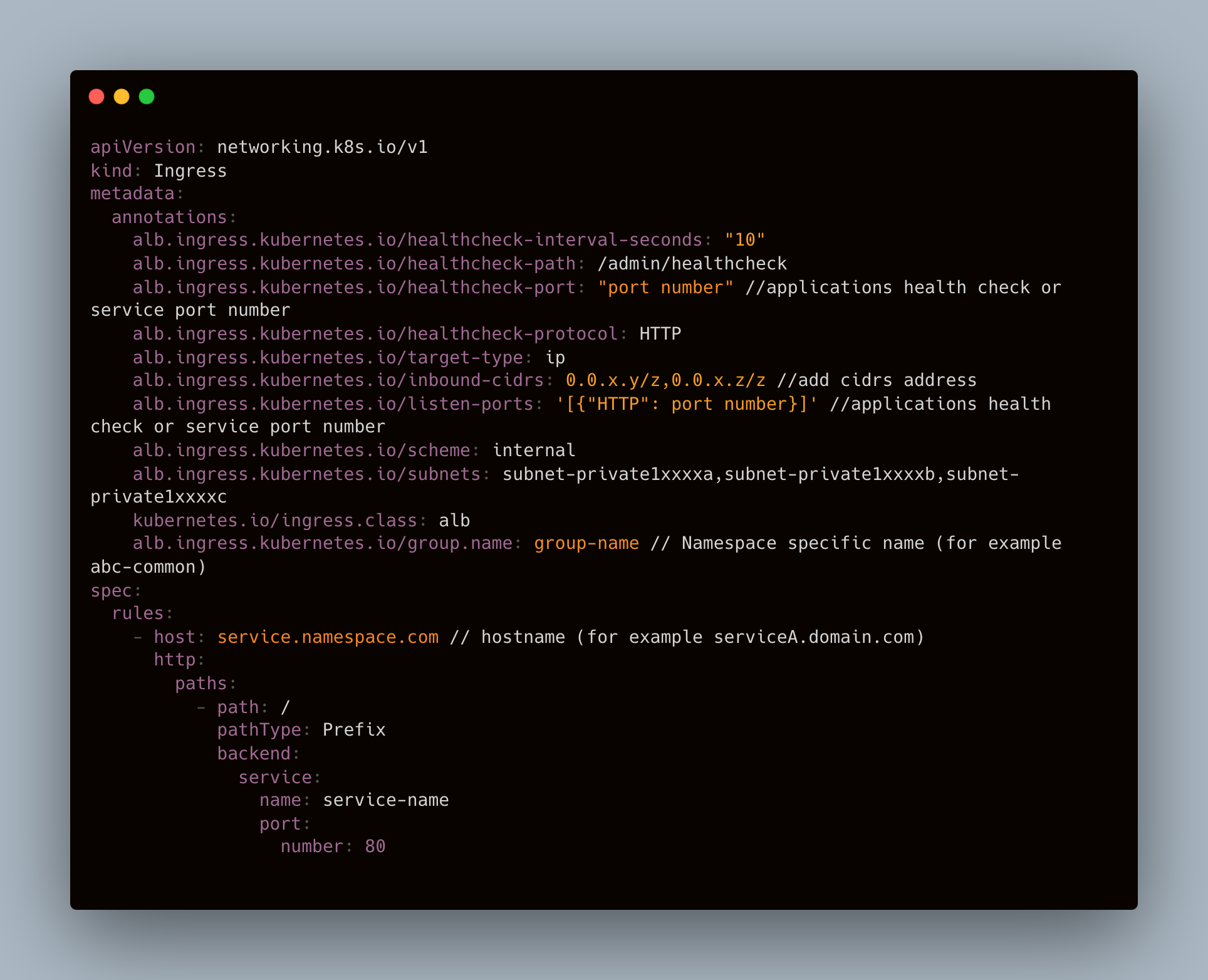

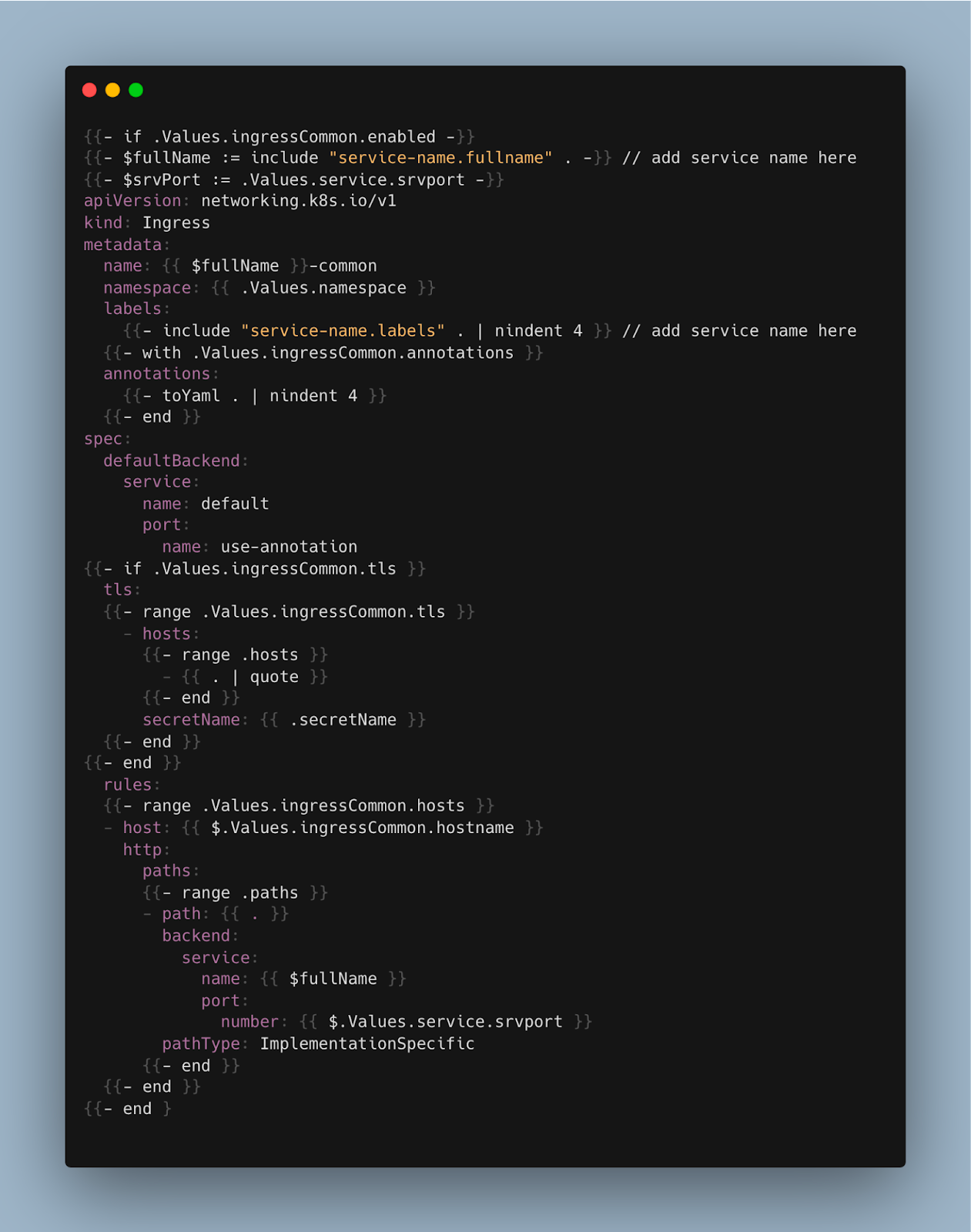

To maintain separate ingress templates for each micro-service while using a common load balancer, we combine per-service Kubernetes ingress resources with a shared ingress group annotation.

The ingress annotation alb.ingress.kubernetes.io/group.name: group-name assigns an ingress resource to an ingress group. The value ("group-name" here) is a user-defined name: the AWS Load Balancer Controller treats all ingress resources that share the same value as one group and serves them from a single ALB. This grouping is useful for several purposes, such as access control, resource organisation, and load balancer configuration.
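
A minimal sketch of the annotation in use is shown below: two ingress resources in different namespaces that share one group name. The namespaces, resource names, hostnames, port, and the group name common-internal are all assumed placeholders, not our actual configuration.

```yaml
# Illustrative only: namespaces, names, hosts, port and group name are assumed placeholders.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: service-a
  namespace: team-a
  annotations:
    alb.ingress.kubernetes.io/group.name: common-internal   # same value on every member
    alb.ingress.kubernetes.io/scheme: internal
    alb.ingress.kubernetes.io/target-type: ip
spec:
  ingressClassName: alb
  rules:
    - host: service-a.example.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: service-a
                port:
                  number: 80
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: service-b
  namespace: team-b                                          # a different namespace
  annotations:
    alb.ingress.kubernetes.io/group.name: common-internal   # joins the same group
    alb.ingress.kubernetes.io/scheme: internal
    alb.ingress.kubernetes.io/target-type: ip
spec:
  ingressClassName: alb
  rules:
    - host: service-b.example.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: service-b
                port:
                  number: 80
```

Because both resources carry the same group name, the controller should reconcile them into one ALB with one host rule per service. The controller documentation also describes an alb.ingress.kubernetes.io/group.order annotation for controlling rule precedence within a group, which may matter if host or path rules overlap.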

How we have optimised the cost with the common group ALB approach in Halodoc

- Check all dependencies of the service across the different AWS services and list every resource that references the service's endpoint.

- Keep at least one curl request ready for the service that is moving to the common ALB.

- Add the common ingress group annotation mentioned above to the values.yaml file in the respective service's Helm chart.

- Create a new ingress template with a manifest configuration that supports host-based routing.

- Perform a helm upgrade so that the new common ingress is created.

- Create the Route 53 record for the hostname specified in the service's values.yaml file.

- Check that the curl request for the service returns the expected results.

- Delete the old load balancer only after verifying that the service is working properly (once a load balancer is deleted, a new load balancer cannot be created with the same endpoint).
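
To make the values.yaml step more concrete, here is a hypothetical fragment. The key names loosely follow the layout that `helm create` scaffolds and are assumptions; the real keys depend on how each chart's ingress template reads them, and the group name and hostname are placeholders.

```yaml
# values.yaml (hypothetical layout; adapt the keys to the chart's own ingress template)
ingress:
  enabled: true
  className: alb
  annotations:
    alb.ingress.kubernetes.io/group.name: common-internal   # placeholder group name
    alb.ingress.kubernetes.io/scheme: internal
    alb.ingress.kubernetes.io/target-type: ip
  hosts:
    - host: service-a.example.com   # placeholder; the Route 53 record is created for this name
      paths:
        - path: /
          pathType: Prefix
```

Once the release has been upgraded and the DNS record points at the common ALB, the prepared curl request can be replayed against this hostname to confirm that the service responds as it did before the move.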

Conclusion:

At Halodoc, our journey into the world of micro-services and cloud architecture has been driven by a commitment to efficiency, cost-effectiveness, and seamless user experiences. By leveraging AWS Kubernetes and Application Load Balancers (ALB) with host-based routing, we've achieved a powerful balance between scalability and control.

By combining services based on their categories and distributing the load across namespaces, we've successfully reduced the number of load balancers from 140 to just 30. This consolidation allows a single load balancer to serve multiple micro-services, resulting in a saving of $3,000 per month and contributing greatly to our cost optimisation efforts. It has streamlined our system and made it more efficient, while also promoting clear separation between services and supporting agile deployment strategies.

Based on our experience, we recommend that companies running ALB in a Kubernetes (K8s) environment use host-based routing to improve efficiency, reduce cost, and keep their services user-centric. As we move forward, our dedication to delivering top-notch healthcare solutions in the digital age remains unwavering. We will continue to explore innovative solutions and best practices, ensuring that Halodoc's services remain efficient, cost-effective, and user-centric.

